Overview

As an AWS Advanced Partner dedicated to the Financial Services Industry, Vertical Relevance knows that many organizations in this industry are at an important crossroads in their journey to the public cloud as they try to balance the appetite for innovation with nonnegotiable security requirements.

Vertical Relevance is rising to this challenge with exciting solutions that dare to achieve both innovation and security.

In this post, we will review the Control Broker module from our Control Foundations framework, then take a closer look at how one particular component, the Control Broker Evaluation Engine, can be a useful tool for enterprises facing the security-innovation dilemma.

Problem Statement

Under the AWS Shared Responsibility Model, large organizations are responsible for the secure configuration of the resources they provision from 200+ AWS services across a global context. As security teams try to keep track of the evolving configuration patterns implemented by each application team, here are some of the most pressing issues they are facing.

| Problem | Details |
|---|---|
| Struggling to maintain compliance for an increasing number of expansively configurable resources | AWS Services are highly customizable, making them flexible building blocks for a variety of use cases. Our Financial Services clients have specific and demanding regulatory requirements for their applications and their data. This means that the AWS Services that they employ must be configured to meet both their business and security requirements. |
| Unable to prevent non-compliant resources from being deployed | If non-compliant AWS resources are permitted to be provisioned to the enterprise cloud environment, this means that operational and security standards are not being enforced. This can jeopardize data integrity and network security, leading to negative business outcomes. |
| Slow development process for engineers due to bottlenecks in manual security reviews | Existing controls put the Security team in charge of manually approving Application teams’ Infrastructure as Code (IaC) templates before resources can be deployed to the environment. Due to the manual nature of these processes, they are both time-intensive and error-prone. Consequently, Application teams’ demand for cloud infrastructure often outpaces the ability of the Security team to review and approve them. This tight temporal coupling impedes development velocity. |

For Financial Services organizations facing regulatory scrutiny, automated controls are clearly needed.

Control Broker Evaluation Engine

Background

Vertical Relevance’s Control Foundations framework uses an automation-first approach to address the challenges we’ve outlined above. It empowers Financial Services organizations to systematically define their security policy and continuously enforce it using the Preventative and Detective Controls of the Control Broker.

As we establish the scope of this post, it’s important to note that the Control Broker is designed to enforce security policy in general, and can be adapted to a variety of use cases across Prevention and Detection, including different Policy as Code frameworks and different Infrastructure as Code templating languages. However, in this post, we focus on Preventative Controls. And while our example use case integrates the Control Broker into a DevOps pipeline, it is important to remember that the Control Broker can be invoked from multiple locations in a variety of ways, as detailed in Control Foundations.

This post will provide a deep dive into the Evaluation Engine component of the Control Broker. At a high level, this Evaluation Engine leverages a serverless design to quickly and automatically retrieve infrastructure configuration files from a DevOps pipeline, evaluate the configuration’s compliance based on the Control Broker’s Policy as Code library, and either allow or deny the deployment of the resources based on the compliance status.

Components

- Infrastructure as Code (IaC) – As we’ve seen, infrastructure configuration can have many permutations outpacing the capacity of manual AWS Console-based deployment, or even traditional imperative scripting methods. For reliability and repeatability, enterprises are adopting declarative templates like AWS CloudFormation and HashiCorp’s Terraform to define cloud infrastructure, and versioning and testing those templates as they would code in a traditional software development lifecycle. As we will see, IaC serves as the origin of this evaluation process.

- Policy as Code (PaC) – PaC allows for the automated evaluation of any arbitrary data source by a set of policies that govern the acceptable forms that the source data can take. PaC can be used to enforce security policies through mandatory and automated review of IaC as part of the process of provisioning resources. A mature enterprise security system utilizing PaC can achieve never-before-seen levels of control over what cloud infrastructure is permitted to be provisioned in its enterprise environment.

- AWS CodePipeline – CodePipeline is part of the Developer Tools on AWS, a suite of services that provide CI/CD functionality with native integrations to other AWS Services. This solution leverages these integrations to connect repositories containing IaC with the services needed to evaluate it.

- AWS Lambda – The AWS Lambda service offers compute in a variety of provided runtimes in such a highly scalable and efficient manner that it abstracts away the details of server management to the point that the applications running on it can be thought of as ‘serverless’. Lambda will serve as the core compute component of this solution.

- AWS Step Functions – As one navigates the ecosystem of AWS Services, it can be helpful to have a tool to orchestrate a series of actions per the logic defined in a state machine. AWS Step Functions is a serverless state machine service capable of managing the logical progression of tasks from state to state, providing native integrations to other AWS services along the way. This solution leverages these capabilities to orchestrate the required API calls and parse their responses per our evaluation logic.

- Amazon DynamoDB – As a serverless, low-latency NoSQL database, DynamoDB has become an essential tool for cloud-native applications and workflows. This solution makes use of it to document the results of the evaluation, as well as to manage the internal state of the Step Function state machine.

- Amazon EventBridge – One can think of EventBridge events as neurons firing within your AWS cloud brain. AWS Services emit default events to announce changes to their state, and applications can push custom events as they see fit. These events propagate via one or more event buses and consumers listening for events can respond accordingly. This solution lays the foundation for operational insights into the evaluation process by emitting events.

Implementation Details

Overview of example code

In our example use case, a Security team has implemented an Evaluation Pipeline to evaluate the IaC proposed by an Application Team.

This Application Team is developing an Example App using the AWS Cloud Development Kit (CDK). CDK enables developers to define the infrastructure required by their application in an imperative programming language that they are familiar with. A CDK application is ultimately compiled to a CloudFormation template, so a CloudFormation template could serve as an alternative entry point to the evaluation.

The Evaluation Pipeline infrastructure is itself defined by a CDK application, which we will refer to as the Root Evaluation Pipeline CDK Application. It is hosted on the Vertical Relevance GitHub in this repository. Let’s take a peek at its structure.

Since this example is designed to stand up in a single deployment, you’ll notice that the supplementary_files directory contains two directories that would normally be owned by separate teams in separate repositories.

We have a simple, arbitrary Example App to be evaluated against simple, arbitrary OPA Policies. The OPA Policies are initialized from this directory to S3. To update the OPA Policies, you can change the local files and then redeploy.

The Application Team’s Example App is hosted in this directory. Upon deployment, the Root CDK Application initializes an AWS CodeCommit repository with this code and outputs the link to clone the CodeCommit repo using SSH. To update the Example App, clone the repository and then commit your changes.

Source & Synth

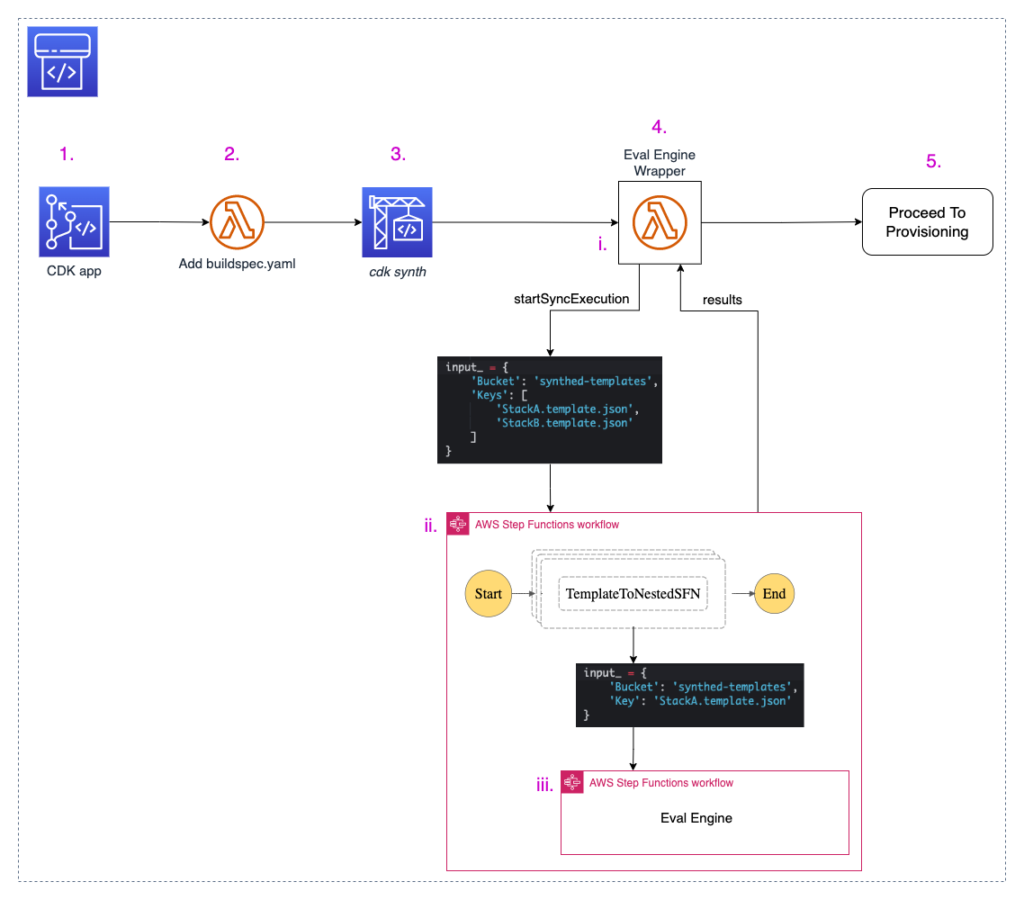

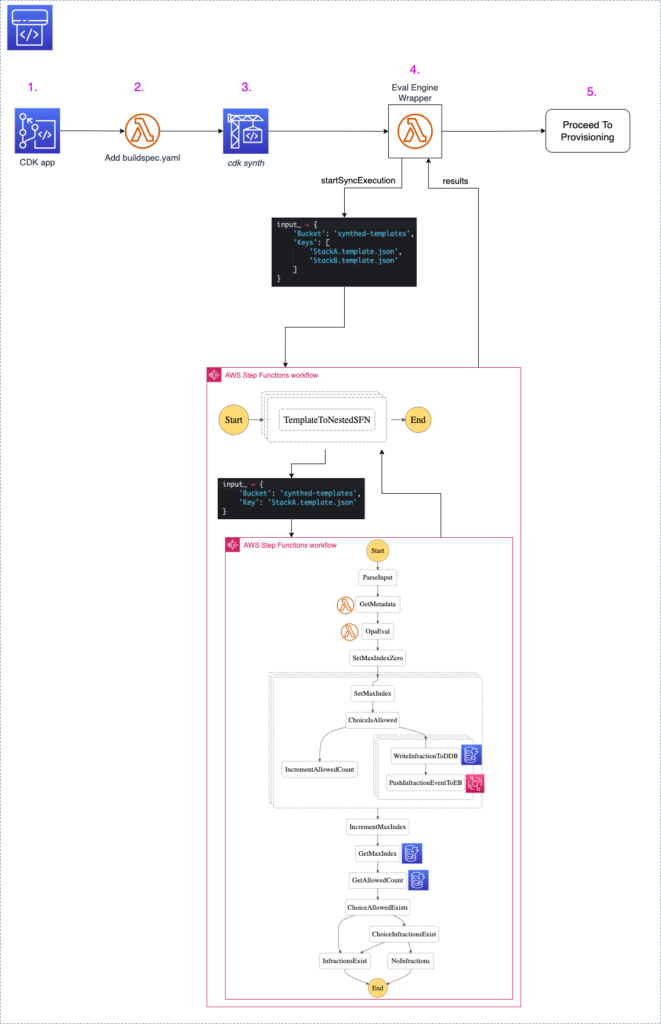

Now that we’ve taken a tour of the Root CDK Application, let’s walk through the Evaluation Pipeline that it creates. First, we’ll examine the source and build stages, #1-3 in the following diagram.

It all begins with the version control repository hosting the Application Team’s Example App. The Application Team is the end-user that we want to enable to build compliant cloud resources. By establishing a specific branch of that repository as the origin of the evaluation process, we enable the Application Team to focus on their application while benefiting from a direct integration with the security review process.

The source of the Evaluation Pipeline is a CodeCommit repository (1). The CodePipeline pipeline is configured to listen for events from CodeCommit, so that when the Application Team pushes code to the target branch, that push starts an execution of the Evaluation Pipeline.

Next, we add a buildspec.yaml file to the CodePipeline artifact (2). From the perspective of the Application Team, this is an implementation detail, so it should not be hosted in the Application Team’s CDK source repository.

The source application and buildspec.yaml are passed to the AWS CodeBuild stage (3) to run the cdk synth command. This synthesizes the CDK app from the imperative language it was written in to the common-denominator declarative template format of CloudFormation. This command produces one or more CloudFormation template files.

Consider a simplified example of the cfn.template.json file.

Here, we declare five resources across two AWS Services: two SQS Queues, two SNS Topics, and an SNS Subscription. This IaC document serves as the source-of-truth for the resources that the Application Team wishes to provision, including the parameters that determine their configuration.
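The original listing is not reproduced in this text. As a stand-in, here is a minimal sketch of a template with the same shape, written as a Python dictionary. Every logical ID and property value below is a hypothetical placeholder, not the Example App's actual template; only the resource types and their counts come from the description above.

```python
from collections import Counter

# Hypothetical stand-in for cfn.template.json. Logical IDs and property
# values are illustrative assumptions, not the Example App's real output.
TEMPLATE = {
    "Resources": {
        "OrdersQueue": {"Type": "AWS::SQS::Queue", "Properties": {}},
        "AuditQueue": {"Type": "AWS::SQS::Queue", "Properties": {}},
        "OrdersTopic": {"Type": "AWS::SNS::Topic", "Properties": {}},
        "AlertsTopic": {"Type": "AWS::SNS::Topic", "Properties": {}},
        "OrdersSubscription": {
            "Type": "AWS::SNS::Subscription",
            "Properties": {
                "Protocol": "sqs",
                "TopicArn": {"Ref": "OrdersTopic"},
                "Endpoint": {"Fn::GetAtt": ["OrdersQueue", "Arn"]},
            },
        },
    }
}


def count_resource_types(template: dict) -> Counter:
    """Tally the declared resources by CloudFormation resource type."""
    return Counter(
        resource["Type"] for resource in template.get("Resources", {}).values()
    )
```

Calling `count_resource_types(TEMPLATE)` gives a quick inventory of what the Application Team is asking to provision, which is the same information the policies will later inspect.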

Control Broker Evaluation Engine

Wrapper

After completing the Source and Build stages, the pipeline has at least one cfn.template.json ready for evaluation. The pipeline proceeds to begin the actual evaluation.

The Evaluation Engine is built on two serverless AWS Services: AWS Lambda and AWS Step Functions. It consists of three components: a Lambda function Wrapper, an Outer Step Function and an Inner Step Function. The Wrapper serves as the intermediary between the root pipeline and the evaluation process itself.

The CloudFormation templates generated in CodeBuild (3) are passed to the Wrapper (4) in the form of an input artifact. The Wrapper passes the S3 paths of these templates to the Outer Step Function by calling the startSyncExecution API. As the name indicates, this is a synchronous operation. The Wrapper waits for the Outer Step Function to respond with the results of the OPA evaluation.

If the proposed IaC fails to satisfy the security or operational requirements defined by the OPA policies, the Wrapper calls the putJobFailureResult API to notify CodePipeline that the EvalEngine stage of the pipeline has failed. This causes the pipeline itself to fail, preventing it from continuing to Proceed to Provisioning (5).

If the Eval Engine does not identify noncompliant resources, then the Eval Engine wrapper calls the putJobSuccessResult API to notify CodePipeline that the EvalEngine stage has succeeded, allowing the pipeline to continue to Proceed to Provisioning (5).
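The Wrapper's control flow can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the repository's actual handler: the function name, the `Templates` input key, the failure message, and the `is_compliant` predicate are hypothetical. The clients are injected so the sketch runs without AWS access; in a Lambda function they would typically be `boto3.client("stepfunctions")` and `boto3.client("codepipeline")`, whose `start_sync_execution`, `put_job_success_result`, and `put_job_failure_result` methods are used here.

```python
import json
from typing import Any, Callable, Dict, List


def handle_job(
    job_id: str,
    template_paths: List[Dict[str, str]],
    state_machine_arn: str,
    sfn: Any,
    codepipeline: Any,
    is_compliant: Callable[[Dict[str, Any]], bool],
) -> bool:
    """Run the Outer Step Function synchronously and report to CodePipeline."""
    # Synchronous call: blocks until the Outer Step Function finishes.
    response = sfn.start_sync_execution(
        stateMachineArn=state_machine_arn,
        # "Templates" is an assumed input key for this sketch.
        input=json.dumps({"Templates": template_paths}),
    )

    # Deny by default: anything other than a clean, compliant run fails the job.
    if response.get("status") == "SUCCEEDED" and is_compliant(response):
        codepipeline.put_job_success_result(jobId=job_id)
        return True

    codepipeline.put_job_failure_result(
        jobId=job_id,
        failureDetails={
            "type": "JobFailed",
            "message": "Proposed IaC did not pass the OPA evaluation.",
        },
    )
    return False
```

How the Outer Step Function's output is reduced to a single yes-or-no answer is left to the caller-supplied `is_compliant` predicate, since that depends on the shape of the output your state machine returns.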

This Proceed to Provisioning step will vary by organization. Some environments may proceed directly to CodeDeploy to deploy the infrastructure via CloudFormation. Others may pause for a manual review as the organization matures towards full automation.

Multiple Templates

As we identified earlier, the cdk synth command generates at least one *.template.json file. CDK apps that declare multiple stacks or utilize nested stacks will generate multiple *.template.json files. Our solution aims to process each of them simultaneously, and reject the entire proposed IaC if any stack contains any non-compliant resources.
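To make the "one or more templates" input concrete, here is a small sketch of how the synthesized file names could be turned into the list of S3 locations handed to the evaluation. The function names, the `Templates`, `Bucket`, and `Key` field names, and the prefix layout are assumptions for illustration; the file names would typically come from listing the synth output directory.

```python
from fnmatch import fnmatch
from typing import Dict, Iterable, List


def select_templates(file_names: Iterable[str]) -> List[str]:
    """Keep only the synthesized CloudFormation templates, in a stable order."""
    return sorted(name for name in file_names if fnmatch(name, "*.template.json"))


def build_outer_input(
    file_names: Iterable[str], bucket: str, prefix: str
) -> Dict[str, List[Dict[str, str]]]:
    """Build a hypothetical input payload: one S3 location per template."""
    templates = select_templates(file_names)
    if not templates:
        # Nothing to evaluate is treated as an error rather than a pass.
        raise ValueError("no *.template.json files found")
    return {
        "Templates": [
            {"Bucket": bucket, "Key": f"{prefix}/{name}"} for name in templates
        ]
    }
```

Raising on an empty list keeps the sketch consistent with a deny-by-default posture: a synth step that produced no templates should not be mistaken for a compliant one.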

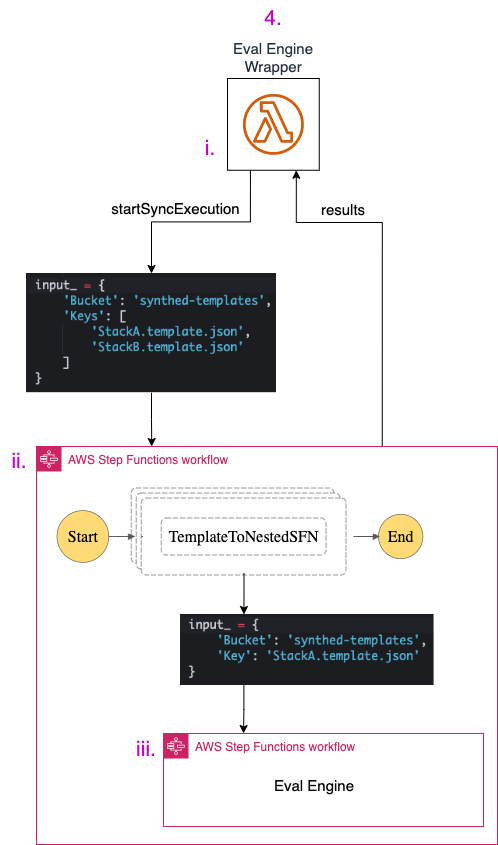

The following diagram zooms in on stage (4) of the root pipeline we introduced above. It tracks the workflow from the Wrapper (i) to the Outer Step Function (ii) to the Inner Step Function (iii) and back.

The Outer Step Function receives as input the S3 paths to at least one template. It enters a Map State (more on this later) to iterate over each template in parallel. For each template, it calls the startSyncExecution API and passes the path to the template as input to the Inner Step Function.

The Outer Step Function operates as the intermediary between the Wrapper and at least one parallel execution of the Inner Step Function. It starts a synchronous operation for each template, waits for all the responses, then relays them back to the Wrapper.
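As a plain-Python analogy (not the state machine definition itself), the Outer Step Function's behavior resembles a parallel map that waits for every result before returning. In this sketch, `evaluate_one` is a hypothetical stand-in for one synchronous execution of the Inner Step Function.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable, List


def scatter_gather(
    templates: Iterable[Dict[str, str]],
    evaluate_one: Callable[[Dict[str, str]], Dict[str, str]],
) -> List[Dict[str, str]]:
    """Evaluate every template in parallel and return results in input order."""
    items = list(templates)
    if not items:
        return []
    # One worker per template, mirroring one Inner execution per Map iteration.
    with ThreadPoolExecutor(max_workers=len(items)) as pool:
        # pool.map blocks until all evaluations finish and preserves order.
        return list(pool.map(evaluate_one, items))
```

The key property shared with the Map State is that no result is relayed until all of them are in, so the caller always sees the complete picture.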

Inner Step Function

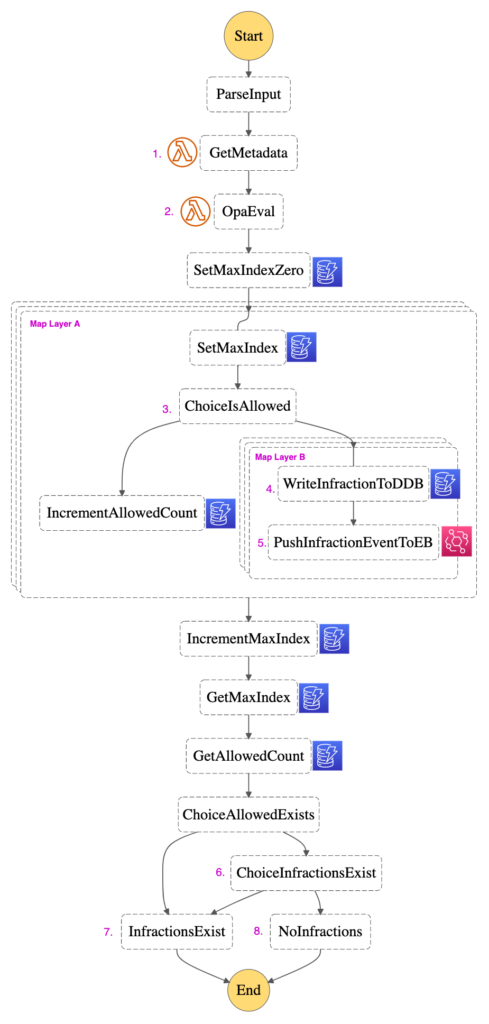

Let’s take a look inside the core of the Evaluation Engine. The following diagram is from the AWS Step Functions console. Each rectangle represents a State in the AWS Step Functions state machine. The Step Function orchestrates a series of tasks according to the logic we have defined. Overall, it takes a single input (the S3 path to a cfn.template.json file) and answers a single question: is this IaC compliant? Take a look at the diagram, then we’ll walk through how we answer that question.

1 – Metadata

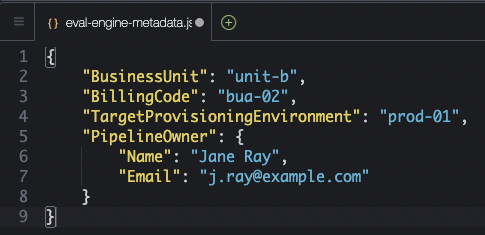

Let’s begin with GetMetadata (1). When defining the ControlBrokerEvalEngineStack in the Root CDK Application, the Security team defines a metadata file that details the ownership of the pipeline and its context within the enterprise. Consider the following eval-engine-metadata.json file, which the root Eval Engine CDK application deploys to S3.

As we start the evaluation process, we use GetMetadata (1) to retrieve this metadata file from S3 and return the result to the Step Function, so that we can enrich the evaluation results going forward.
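A minimal sketch of this retrieval step, assuming the metadata is a JSON object stored in S3. The function names and the `metadata` key used for enrichment are hypothetical; the `s3` argument is an injected client with a boto3-style `get_object` method, such as `boto3.client("s3")`.

```python
import json
from typing import Any, Dict


def get_metadata(s3: Any, bucket: str, key: str) -> Dict[str, Any]:
    """Fetch and parse the metadata file from S3."""
    body = s3.get_object(Bucket=bucket, Key=key)["Body"].read()
    return json.loads(body)


def enrich(eval_result: Dict[str, Any], metadata: Dict[str, Any]) -> Dict[str, Any]:
    """Attach the pipeline metadata to a result without mutating the input."""
    return {**eval_result, "metadata": metadata}
```

Returning a new dictionary from `enrich` keeps the original result intact, which makes it easier to reason about what each later state receives.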

2 – Evaluation

OpaEval (2) constitutes the core compute component of the Evaluation Engine. Remember that the input for our Inner Step Function is the bucket and key values for the evaluation of a single CFN template. The Step Function passes these values to the OpaEval Lambda function, which uses them to retrieve the cfn.template.json from S3. This will serve as the input for the opa eval command: the JSON document subject to evaluation.

The rules by which it will be evaluated are defined by the example OPA Policies we drew your attention to earlier. CDK deploys these policies to S3. When we define the event with which the Step Function invokes the OpaEval Lambda function, we include the name of the OPA policies bucket.

Once the OpaEval Lambda function has retrieved the two main arguments required by the opa eval command—the JSON to be evaluated and the OPA Policies that govern the evaluation—it is ready to execute the command. Here we use a Python subprocess to invoke the opa eval command against the OPA binary that we included as part of our Lambda deployment package.
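The subprocess call can be sketched as follows. This is an illustration under assumptions rather than the repository's handler: the function names, the binary path, the local file paths, and the default `data` query are hypothetical. The `--data`, `--input`, and `--format` flags are standard `opa eval` options, and with `--format json` the command prints a document whose `result` entries each carry a list of `expressions`.

```python
import json
import subprocess
from typing import Any, Dict, List


def build_opa_command(
    opa_path: str, policy_dir: str, input_path: str, query: str = "data"
) -> List[str]:
    """Assemble the opa eval invocation as an argument list (no shell)."""
    return [
        opa_path, "eval",
        "--format", "json",
        "--data", policy_dir,    # directory holding the downloaded policies
        "--input", input_path,   # the template downloaded from S3
        query,
    ]


def run_opa_eval(command: List[str], timeout_seconds: int = 30) -> Dict[str, Any]:
    """Run the command and parse its stdout; raises if opa exits non-zero."""
    completed = subprocess.run(
        command, capture_output=True, text=True, check=True, timeout=timeout_seconds
    )
    return json.loads(completed.stdout)


def extract_values(opa_output: Dict[str, Any]) -> List[Any]:
    """Flatten the JSON output into the list of evaluated expression values."""
    return [
        expression["value"]
        for result in opa_output.get("result", [])
        for expression in result.get("expressions", [])
    ]
```

Passing the command as a list rather than a shell string avoids quoting problems with paths, and `check=True` surfaces a failed evaluation as an exception instead of an empty result.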

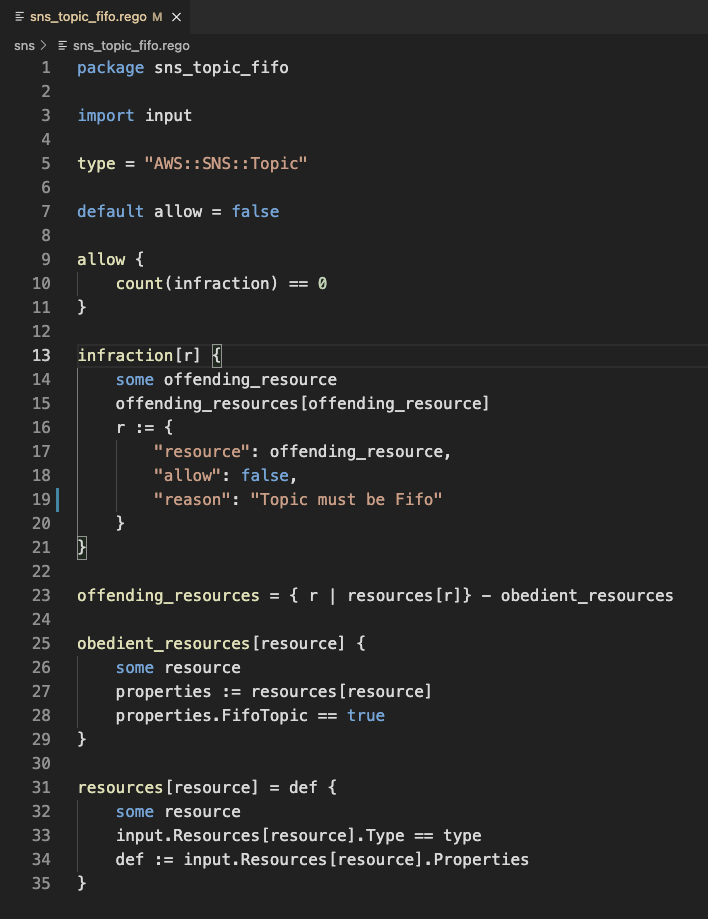

Once the command is executed, the stdout is returned to the Lambda function. To understand the result of the opa eval command, we first must understand the structure of the OPA Policies that define the evaluation process. Let’s take a look at an example OPA policy.

Pay attention to the three keys that will form the response from this particular OPA Policy: ‘resource’, ‘allow’, and ‘reason’. We will see these same keys referenced again later on.

This architecture uses a modular, single-purpose Lambda function to get this result, and then leverages the functionality of AWS Step Functions to parse it. The OpaEval Lambda simply returns to the Step Function a list of every item returned by opa eval. We will call this list the EvalResults.

AWS Step Functions has the ability to perform the same operation on every item in a list in parallel using a Map State. This allows us to chart a journey for each item in that list—in our case we want to process each item in EvalResults.

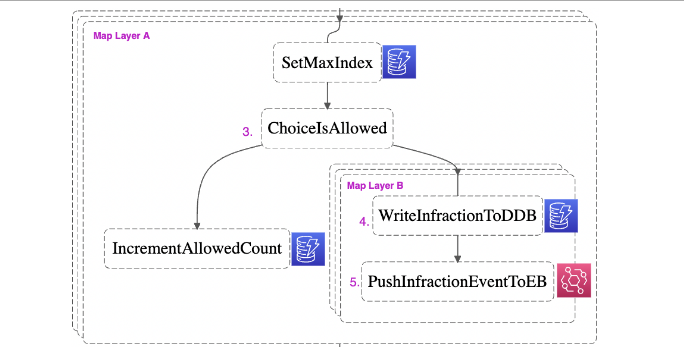

This brings us to Map Layer A in our diagram. Notice how the three layers indicate that the operations visible on the top layer are being simultaneously executed on every item in the input array.

The first thing we want to do on each EvalResult is to determine if it is an infraction or not by using a Choice State. At ChoiceIsAllowed (3), if an EvalResult contains a list of infractions, it is directed to infraction processing. This infraction processing is designed to maximize observability and flexibility for downstream consumption.

3 – A database of infractions

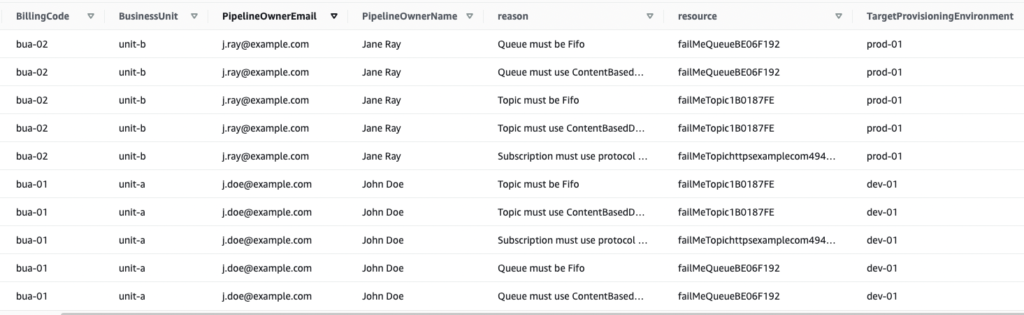

To process this list of infractions, we enter another, nested Map State: Map Layer B. Recall that the operations visible within Map Layer B will be performed on each infraction.

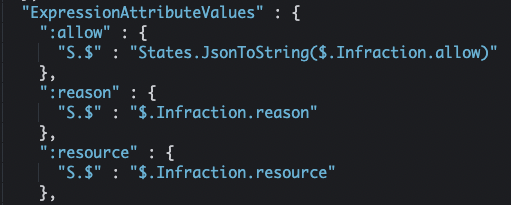

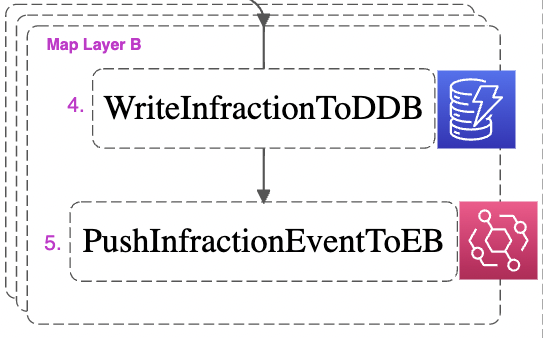

First, we write the infraction to DynamoDB (4). As you look at the following snippet of the Amazon States Language code that invokes the UpdateItem API, recall the three keys we saw in the OPA policy above: ‘resource’, ‘allow’, and ‘reason’.

Notice the tight coupling between the structure of the OPA policy and the logic used to process the results. Keep this in mind as you consider the structure of your OPA policies and the infrastructure used to evaluate and track their responses.

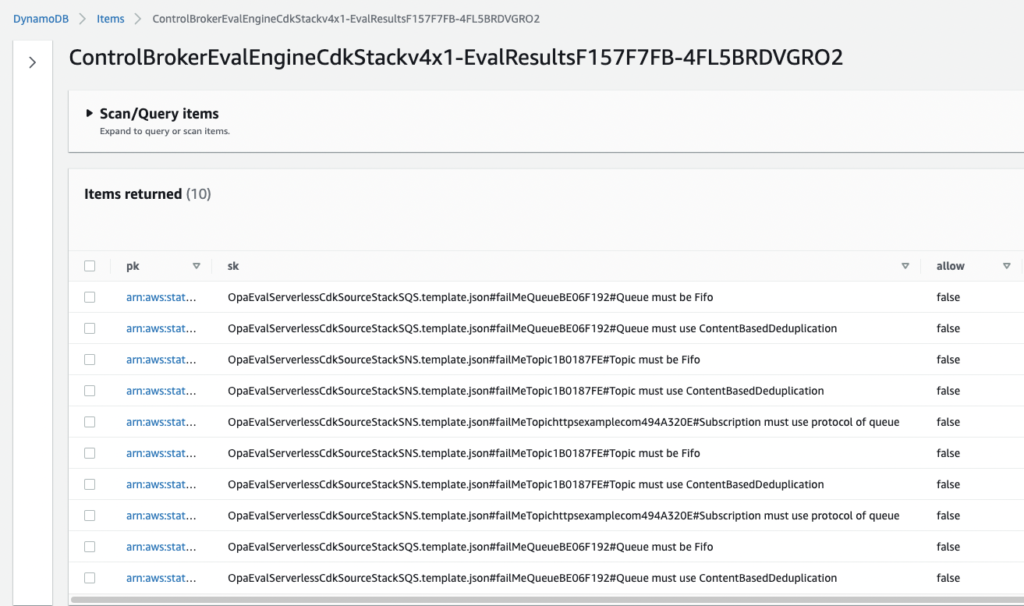

Below are screenshots from the DynamoDB console showing the Eval Results table. For legibility, the metadata fields for the ten items are displayed separately below.

Here, we can see how the metadata retrieved in GetMetadata (1) is tracked through the Step Function to enrich each infraction with context as it’s written to DynamoDB. The primary key, or ‘pk’ (truncated above), is a concatenation of the ExecutionIds of the Outer and Inner Step Functions. The former serves as a UUID for each execution of the overall Eval Engine pipeline, a helpful access pattern within the single-table design of this NoSQL database.
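For readers more comfortable with Python than Amazon States Language, the write in WriteInfractionToDDB (4) can be approximated as the arguments to a DynamoDB UpdateItem call. This is a sketch under assumptions: the `#` separator in the primary key, the `sk` sort key, and the attribute layout are hypothetical, while the three infraction keys and the concatenated ExecutionIds come from the description above.

```python
import json
from typing import Any, Dict


def build_update_item(
    table_name: str,
    outer_execution_id: str,
    inner_execution_id: str,
    infraction: Dict[str, Any],
    metadata: Dict[str, Any],
) -> Dict[str, Any]:
    """Build UpdateItem arguments in the low-level DynamoDB attribute format."""
    return {
        "TableName": table_name,
        "Key": {
            # pk concatenates the Outer and Inner ExecutionIds; '#' is assumed.
            "pk": {"S": f"{outer_execution_id}#{inner_execution_id}"},
            # Hypothetical sort key: one item per offending resource.
            "sk": {"S": infraction["resource"]},
        },
        "UpdateExpression": "SET #allow = :allow, #reason = :reason, #metadata = :metadata",
        # Name placeholders sidestep any clash with DynamoDB reserved words.
        "ExpressionAttributeNames": {
            "#allow": "allow",
            "#reason": "reason",
            "#metadata": "metadata",
        },
        "ExpressionAttributeValues": {
            ":allow": {"BOOL": bool(infraction["allow"])},
            ":reason": {"S": str(infraction["reason"])},
            ":metadata": {"S": json.dumps(metadata, sort_keys=True)},
        },
    }
```

With a boto3 client, the result could be passed as `dynamodb.update_item(**build_update_item(...))`. Note how the function reads `resource`, `allow`, and `reason` directly, which is the same coupling to the policy structure that the state machine has.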

4 – Downstream consumability

Having completed WriteInfractionToDDB (4), we continue through Map Layer B. There is one last step to perform to achieve our goals of maximum observability and simple downstream consumption: pushing the infraction to an Amazon EventBridge event bus as an event (5).

Take note that just as in WriteInfractionToDDB (4), the following Amazon States Language code defining the PutEvents operation is coupled to the structure of the OPA Policies.

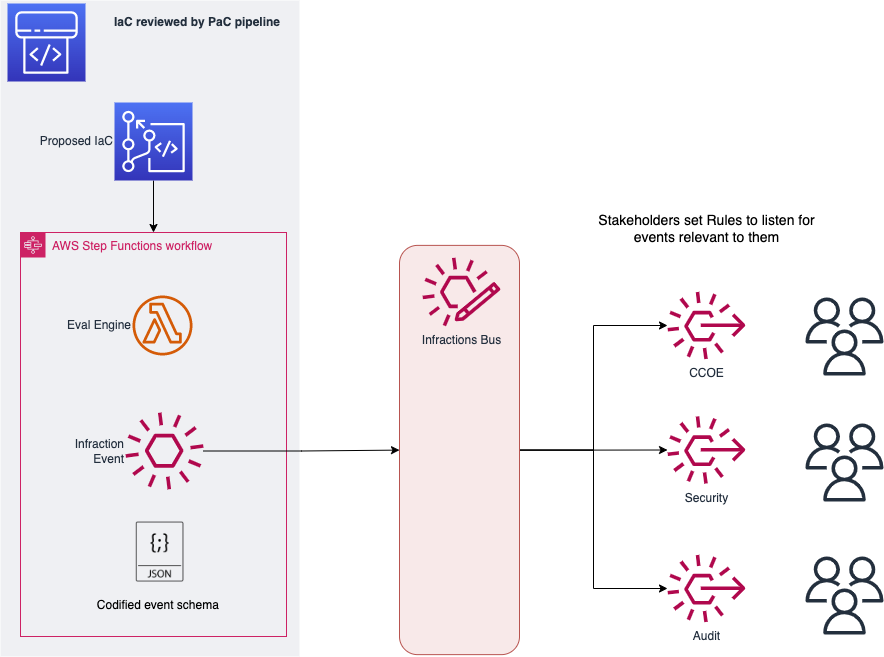
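The same operation can be sketched in Python as a single PutEvents entry. The `Source` and `DetailType` values and the function name are hypothetical placeholders; `EventBusName`, `Source`, `DetailType`, and `Detail` are the standard entry fields, and `Detail` must be a JSON string.

```python
import json
from typing import Any, Dict


def build_infraction_event(
    infraction: Dict[str, Any],
    metadata: Dict[str, Any],
    event_bus_name: str,
    source: str = "example.eval-engine",  # assumed source name
) -> Dict[str, str]:
    """Build one PutEvents entry carrying the infraction and its metadata."""
    detail = {
        "resource": infraction["resource"],
        "allow": infraction["allow"],
        "reason": infraction["reason"],
        "metadata": metadata,
    }
    return {
        "EventBusName": event_bus_name,
        "Source": source,
        "DetailType": "Infraction",  # assumed detail type
        "Detail": json.dumps(detail),
    }
```

With a boto3 client, the entry could be sent with `events.put_events(Entries=[entry])`.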

Once that infraction is pushed to the event bus as an event, it is available to any number of downstream consumers. Consider the following example of how infraction events might be consumed.

Stakeholders who support and oversee the Application teams’ infrastructure can set EventBridge rules to listen for all events, or filter to consume only specific events of interest. Cloud Center of Excellence (CCOE) teams could identify commonly misconfigured resources and provide the application teams with appropriate templates and resources. Security teams could monitor this as they iterate on the security policies themselves.

A particularly proactive security team could even listen for a list of known high-risk infractions, such as a proposal to provision a public S3 bucket. Even though the infraction indicates that this resource was prevented from provisioning, examining the provided metadata and performing a follow-up might be appropriate. EventBridge rules can target SNS topics for notifications, or even Lambda functions that interact with the broader enterprise information stream and 3rd-party tools.
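A rule of that kind could be sketched as follows. The source name, detail type, and reason strings are hypothetical and would need to match whatever your events actually carry. The `events` argument is an injected client with a boto3-style `put_rule` method, such as `boto3.client("events")`.

```python
import json
from typing import Any, Dict, Iterable, List


def build_high_risk_pattern(
    source: str, high_risk_reasons: Iterable[str]
) -> Dict[str, Any]:
    """Event pattern matching infractions whose reason is on a watch list."""
    reasons: List[str] = list(high_risk_reasons)
    return {
        "source": [source],
        "detail-type": ["Infraction"],  # assumed detail type
        "detail": {"reason": reasons},  # matches any one of the listed values
    }


def create_rule(events: Any, name: str, event_bus_name: str, pattern: Dict[str, Any]) -> Any:
    """Register the pattern as an enabled rule on the given event bus."""
    return events.put_rule(
        Name=name,
        EventBusName=event_bus_name,
        EventPattern=json.dumps(pattern),
        State="ENABLED",
    )
```

A rule on its own only selects events. A target, such as an SNS topic or a Lambda function, still has to be attached before anyone is notified.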

5 – The Inner Step Function’s denouement

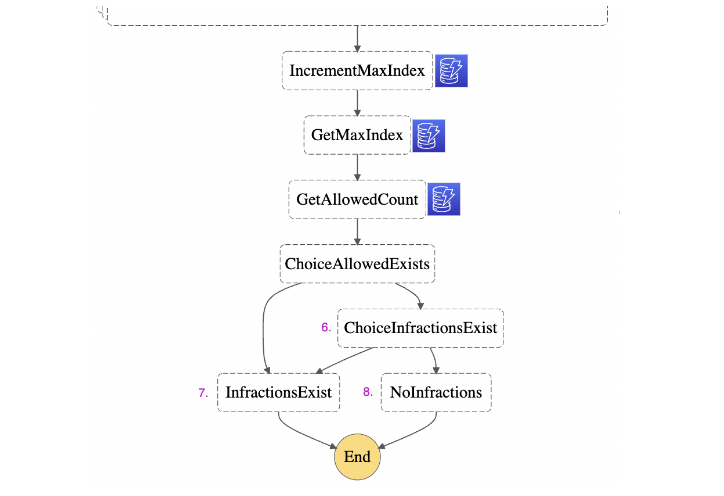

In this scatter-gather pattern, exiting Map Layer B & Map Layer A means transitioning from the scatter portion to the gather portion. Let’s examine the final steps of our Inner Step Function.

We want to implement a deny-by-default logic. The Eval Engine should approve the IaC only if it can explicitly confirm that every EvalResult had an ‘allow’ value of True. Since we’re orchestrating this logic within the Step Function, we’ll use the DynamoDB table to manage the state using two counters. The first, MaxIndex, counts the total number of EvalResults by accessing the $$.Map.Item.Index element of the Context Object within Map Layer A. The second counter, AllowedCount, tallies the number of EvalResults that do not contain any infractions, which, per the structure of our example OPA policies, indicates an ‘allow’ value of True.

The logic in ChoiceInfractionsExist (6) is as follows. If the AllowedCount is equal to the MaxIndex, then every item in EvalResults is Allowed. This explicitly confirms that no infractions were found, so the Step Function proceeds to the Succeed State NoInfractions (8). If infractions were found, AllowedCount would be less than MaxIndex. Per our deny-by-default logic, if this, or any other outcome occurs, then the Step Function proceeds to the Fail State InfractionsExist (7).
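The decision in ChoiceInfractionsExist (6) reduces to a comparison of the two counters. Here is a minimal Python sketch of that logic. Treating missing counters or a count of zero as a failure is this sketch's interpretation of "any other outcome", not something the state machine is confirmed to do.

```python
from typing import Optional


def choice_infractions_exist(
    max_index: Optional[int], allowed_count: Optional[int]
) -> str:
    """Return the name of the terminal state under deny-by-default logic."""
    counters_present = type(max_index) is int and type(allowed_count) is int
    # Assumption: zero evaluated results confirms nothing, so it is denied too.
    if counters_present and max_index > 0 and allowed_count == max_index:
        return "NoInfractions"
    # Fewer allowed than evaluated, missing counters, or anything unexpected.
    return "InfractionsExist"
```

The success branch is the only one spelled out explicitly. Everything else falls through to failure, which is what makes the logic deny-by-default.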

We use these states to dictate the Status response field described in the StartSyncExecution API. The Fail State InfractionsExist (7) indicates a Status of ‘FAILED’. Conversely, the Succeed State NoInfractions (8) indicates a Status of ‘SUCCEEDED’.

The Outer Step Function will return this Status for each nested Inner Step Function to the Eval Engine Wrapper. It’s the Wrapper that contains the logic to determine a PipelineProceeds Boolean from a list of responses generated by each Inner Step Function. That logic dictates that PipelineProceeds should be False if any of the generated *.template.json files contain any noncompliant resources. That is to say, if any of the Inner Step Functions reach the InfractionsExist (7) Fail State, then the entire Evaluation Engine pipeline is halted. Conversely, in order for the proposed IaC to receive approval to proceed to provisioning, all of the generated *.template.json files must reach the NoInfractions (8) Succeed State upon evaluation.
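The PipelineProceeds rule described above can be expressed in a few lines of Python. The function name and the decision to treat an empty list as a denial are assumptions of this sketch; the ‘SUCCEEDED’ and ‘FAILED’ values are the Status strings mentioned earlier.

```python
from typing import Iterable


def pipeline_proceeds(inner_statuses: Iterable[str]) -> bool:
    """True only if at least one template was evaluated and all succeeded."""
    statuses = list(inner_statuses)
    # An empty list means nothing was confirmed compliant, so deny.
    return bool(statuses) and all(status == "SUCCEEDED" for status in statuses)
```

For example, `pipeline_proceeds(["SUCCEEDED", "FAILED"])` is `False`, because a single non-compliant template is enough to halt the whole pipeline.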

That closes the loop! Below is the final architecture of the solution.

Solution Mapping

As we’ve discussed, Security Teams that can express their security requirements as code are able to automatically enforce those requirements, providing them with several advantages. They can more effectively interface with the Application Teams, providing feedback in a more timely and consistent manner. In our example use case, we saw how a Security Team required proposed IaC to submit to an automated evaluation against the Security Team’s library of OPA Policies.

| Problem | Solution | AWS Services |
|---|---|---|
| Struggling to maintain compliance for an increasing number of expansively configurable resources | Codifying security policy as code removes the need to repeatedly review and reject IaC on account of the same noncompliant configuration parameter. This elevates your cloud security experts from the role of a traffic judge swamped by repetitive casework to the role of a Senator methodically legislating security policy with the confidence that it will be automatically enforced. Only then will your security team be able to form a cogent security opinion on the breadth of AWS offerings. | OPA evaluation performed by AWS Lambda as orchestrated by AWS Step Functions. |
| Unable to prevent noncompliant resources from being deployed | Just as a junior developer wouldn’t bring code to their technical lead without running the required suite of unit tests, Application Teams can now be required to have their proposed IaC evaluated against a library of security policies before moving towards the infrastructure provisioning process. Application Teams that settle into this workflow often find they are adding more value to the applications themselves and deploying them with more confidence. This institutionalizes knowledge of security requirements. | CodePipeline is configured to block IaC deemed noncompliant from proceeding to provisioning. |
| Slow development process for engineers due to bottlenecks in manual security reviews | Security practices are given a voice as code. The security team can articulate their vision across their organization in a clear, consistent and agile manner. | CodePipeline manages an automated security review. |

Summary

This comprehensive and efficient Evaluation Engine is cloud-native and serverless, perfect for the modern CI/CD process.

This is the latest example of how Vertical Relevance is a leader in the Policy as Code space. This post outlined how to operationalize PaC with a serverless Evaluation Engine as part of the broader Control Broker solution. Get in touch with us to learn more about the benefits of operationalizing the automated enforcement of security policies.

Related Solutions

This Evaluation Engine brings a serverless perspective to a particular component of the broader Control Foundations framework. The original Control Broker in Control Foundations also executes the opa eval command as a Python subprocess, but does so in a CodeBuild container rather than the serverless solution described above.