How a leading worldwide provider of payment technology improves software delivery by providing self-service infrastructure products that are readily available to be consumed by application teams

About the Customer

The customer is one of the world’s largest providers of digital payment solutions. It supports a variety of payment methods and handles billions of transactions worldwide every year.

Key Challenge/Problem Statement

The customer is carrying out a large mainframe modernization and cloud migration effort with the goals of increasing development efficiency, reducing overall costs, and leveraging the elasticity of the AWS Cloud. A number of its applications are either being developed within or being migrated to the AWS Cloud, and application teams need to deploy to many accounts. One of the major pain points was the inability to deploy infrastructure and applications quickly to different accounts on demand because of challenges in prerequisite setup and configuration. The customer needed a scalable way to produce pre-configured infrastructure and application products that have been tested, validated, and approved, and to share them across its accounts. To address this challenge, Vertical Relevance was brought in to help architect and develop a cross-account solution that lets the customer and its application teams deploy these pre-configured products quickly, securely, and through an automated self-service mechanism. Development teams can now create compliant, secure AWS resources on demand using Service Catalog.

State of Customer’s Business Prior to Engagement

Before the engagement, deploying new infrastructure for an application team involved multiple manual processes that took over a week to perform. Required configurations such as infrastructure-related permissions and access, naming and tagging values, and other prerequisites often took time to gather and configure correctly before a piece of infrastructure could be deployed. The new solution aims to eliminate the manual steps in the infrastructure deployment process and to increase the speed and ease with which application teams can deploy infrastructure across a multitude of accounts.

Proposed Solution & Architecture

The solution used a combination of AWS services and third-party services to deliver a framework for developing, validating, and sharing a collection of pre-configured products built with best practices in mind. In this implementation, Service Catalog acts as the front-end UI for deploying products within a specified account. A centralized hub account serves as the primary location for uploading and storing the collection of products, and products are shared from it to the required consumer accounts. A Jenkins Service Broker was developed to serve as the interface between Jenkins and Service Catalog. The broker is a bootstrapping pipeline that triggers the build of any product.

Two components are essential for provisioning these products: Terraform for Infrastructure as Code and Helm for Kubernetes product and package deployments. Helm is used to maintain the configuration of products and to ensure that the set of products required to provision a workload is deployed together as a package.

As part of the provisioning process of a product, a collection of OPA (Open Policy Agent) policy tests is executed against the product’s source code to validate that the defined configurations are acceptable before deployment can occur.
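To make the idea of a pre-deployment policy gate concrete, the sketch below expresses one such rule in Python rather than in OPA’s own policy language, purely for illustration. It assumes the product is planned with Terraform and that the plan has been exported as JSON (the `resource_changes` structure produced by `terraform show -json`). The required tag names and the sample plan are hypothetical and are not taken from the customer’s policies.

```python
"""Illustrative stand-in for a policy check; the real checks would be OPA policies."""

# Hypothetical tagging standard; the actual required tags are not given in this case study.
REQUIRED_TAGS = {"owner", "cost-center", "environment"}


def find_tag_violations(plan: dict) -> list[str]:
    """Return one message per planned resource that lacks a required tag."""
    violations = []
    for change in plan.get("resource_changes", []):
        actions = change.get("change", {}).get("actions", [])
        # Only resources being created or updated need to satisfy the rule.
        if not {"create", "update"} & set(actions):
            continue
        after = change["change"].get("after") or {}
        # Resource types that have no "tags" attribute are skipped in this sketch.
        if "tags" not in after:
            continue
        tags = after.get("tags") or {}
        missing = REQUIRED_TAGS - set(tags)
        if missing:
            violations.append(f"{change['address']}: missing tags {sorted(missing)}")
    return violations


# Hypothetical plan fragment in the shape of `terraform show -json` output.
SAMPLE_PLAN = {
    "resource_changes": [
        {
            "address": "aws_s3_bucket.logs",
            "change": {
                "actions": ["create"],
                "after": {"tags": {"owner": "team-a", "environment": "dev"}},
            },
        },
        {
            "address": "aws_sqs_queue.events",
            "change": {
                "actions": ["create"],
                "after": {
                    "tags": {"owner": "team-a", "cost-center": "42", "environment": "dev"}
                },
            },
        },
    ]
}

if __name__ == "__main__":
    for message in find_tag_violations(SAMPLE_PLAN):
        print(message)
```

A pipeline stage built on this idea would fail the build whenever the returned list is non-empty, which mirrors how a policy gate blocks deployment until every check passes.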

A collection of global parameters and secrets provides the products with essential configuration information when they are deployed into any account. HashiCorp Vault and Secrets Manager provide secure storage and rotation of the secret values, properties, and credentials that products require during and after deployment. Non-sensitive global variables and property values are stored in the SSM Parameter Store, from which they can be read and injected as Terraform input parameters for product deployments.
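One way such injection could work is sketched below. It assumes boto3-style clients for SSM and Secrets Manager are passed in, that secrets are stored as JSON objects, and that values are handed to Terraform through `TF_VAR_<name>` environment variables. The parameter path and the function names are hypothetical; the case study does not describe the actual mechanism.

```python
"""Sketch: turn Parameter Store values and one secret into Terraform input variables."""
import json


def load_global_parameters(ssm_client, path: str) -> dict:
    """Read every parameter under `path`, following pagination tokens."""
    values = {}
    kwargs = {"Path": path, "Recursive": True}
    while True:
        page = ssm_client.get_parameters_by_path(**kwargs)
        for param in page["Parameters"]:
            # Keep only the last path segment, e.g. "/platform/global/vpc_id" -> "vpc_id".
            values[param["Name"].rsplit("/", 1)[-1]] = param["Value"]
        token = page.get("NextToken")
        if not token:
            return values
        kwargs["NextToken"] = token


def load_secret(secrets_client, secret_id: str) -> dict:
    """Read a secret that is assumed to be stored as a JSON object of key/value pairs."""
    response = secrets_client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])


def terraform_env(parameters: dict, secrets: dict) -> dict:
    """Expose values as TF_VAR_<name> environment variables, which Terraform reads as inputs."""
    merged = {**parameters, **secrets}
    return {f"TF_VAR_{name}": str(value) for name, value in merged.items()}
```

In a pipeline, the clients would typically come from `boto3.client("ssm")` and `boto3.client("secretsmanager")`, and the resulting dictionary would be merged into the environment of the Terraform process. Passing secrets through the environment keeps them out of files checked into the product repository.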

As part of the orchestration of product deployment using Terraform and Helm, Amazon S3 is used to maintain state for deployed products. In this implementation, S3 is essential for providing state file versioning, backups, and replication across regions. Another critical piece of the orchestration is the set of Jenkins agents deployed into every account that hosts deployed products. These agents can assume IAM roles whose permissions are restricted to their local accounts, which lets them deploy products within the accounts in which they live.
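As a rough illustration of keeping state separate for each deployed product, the sketch below builds `terraform init` arguments for an S3 backend. It assumes the product’s Terraform code declares an empty `backend "s3" {}` block that is completed at init time. The state key layout, bucket name, and function name are hypothetical. Versioning and cross-region replication are bucket-level settings and are not configured here.

```python
"""Sketch: derive per-product S3 backend settings for `terraform init`."""


def backend_init_args(
    bucket: str, region: str, account_id: str, product: str, instance: str
) -> list[str]:
    """Build `terraform init` arguments so each provisioned product gets its own state object."""
    # Hypothetical key layout: one state file per account, product, and provisioned instance.
    key = f"{account_id}/{product}/{instance}/terraform.tfstate"
    settings = {"bucket": bucket, "key": key, "region": region, "encrypt": "true"}
    args = ["terraform", "init", "-input=false"]
    for name, value in settings.items():
        args.append(f"-backend-config={name}={value}")
    return args


if __name__ == "__main__":
    # Example values only; none of these names come from the case study.
    print(" ".join(backend_init_args(
        "org-tf-state", "us-east-1", "111111111111", "rds-postgres", "orders-db"
    )))
```

A Jenkins agent could pass the returned list to `subprocess.run` from the product’s working directory after assuming its account-local role.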

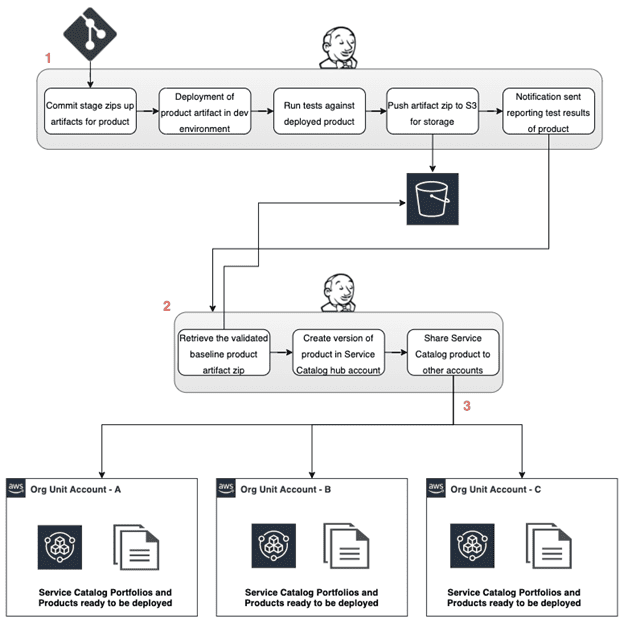

The two diagrams in this section illustrate, at a high level, both the development process and the deployment process of a product.

- The first main step in this process involves the CI product pipeline. A product developer commits the source code for a product, which triggers the product creation process. This includes the following steps:

  - Deploying the product into a dev environment with baseline configurations.

  - Running a suite of tests against the deployed product to ensure that it functions properly and that standardized compliance requirements are met.

  - Creating a zip artifact of the product source code and pushing it to S3 for versioned storage and later retrieval.

  - Sending a notification that reports the test results of the product.

- The second main step in this process involves the CD process and the cross-account product publishing pipeline. When a product has been validated and approved for use across the organizational units, the following steps occur:

  - The CD pipeline retrieves the product artifact zip file from its storage location.

  - A version of the product is created within the centralized Service Catalog hub account and is then ready to be shared out across the organization.

  - The product is then shared out to and created within the spoke accounts, based on product metadata and tag values that determine which accounts the product should be shared with.
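The tag-driven selection of spoke accounts described in the last step could look roughly like the sketch below. The matching rule (an account qualifies when it matches every tag on the product), the tag names, and the `Account` type are assumptions made for illustration; the case study does not specify how metadata is evaluated.

```python
"""Sketch: choose the spoke accounts a product version should be shared with."""
from dataclasses import dataclass, field


@dataclass
class Account:
    account_id: str
    tags: dict = field(default_factory=dict)


def target_accounts(product_tags: dict, accounts: list[Account]) -> list[str]:
    """Return IDs of accounts whose tags match every tag on the product.

    A product tag value may be a single string or a list of accepted values.
    """
    selected = []
    for account in accounts:
        for key, wanted in product_tags.items():
            accepted = wanted if isinstance(wanted, list) else [wanted]
            if account.tags.get(key) not in accepted:
                break  # one mismatch disqualifies the account
        else:
            selected.append(account.account_id)
    return selected


# Hypothetical accounts and tags.
ACCOUNTS = [
    Account("111111111111", {"environment": "dev", "business-unit": "payments"}),
    Account("222222222222", {"environment": "prod", "business-unit": "payments"}),
    Account("333333333333", {"environment": "dev", "business-unit": "risk"}),
]

if __name__ == "__main__":
    print(target_accounts({"business-unit": "payments"}, ACCOUNTS))
```

The resulting account IDs could then drive the sharing step, for example one Service Catalog portfolio share per account from the hub.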

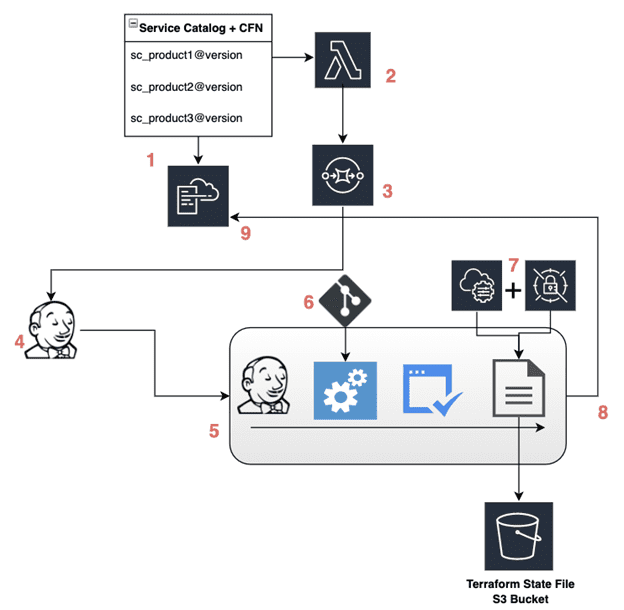

To consume the product, teams request and provision it through the Service Catalog UI, which then begins deploying the product’s CloudFormation template.

- An AWS Lambda custom resource is called from the CloudFormation template and is used to insert product CloudFormation configuration parameters into a message object.

- The Lambda custom resource then posts the message object to an SQS queue, from which it is received by the Jenkins Service Broker.

- The Jenkins Service Broker uses the message object to call the relevant Jenkins installation and to set parameters for the Jenkins pipeline. It also uses the message object to set the response request details in the post-build task, and it rolls back the deployment if the call to Jenkins fails.

- A Jenkins pipeline dedicated to the requested product is created and uses a Jenkins service role to deploy the product infrastructure. Stages within this product-specific pipeline include the following:

  - The pipeline retrieves a configuration file from the relevant application team’s repository that is used to override the baseline product configurations and customize the IaC configuration of the product during provisioning.

  - Before the product can be deployed, multiple tests are run against the proposed Terraform infrastructure configuration to confirm that its compliance, security, and code integrity are acceptable. These tests include a linter and a suite of OPA policy checks against the proposed infrastructure. When all tests for the proposed infrastructure have passed, the pipeline is allowed to proceed to deployment.

  - The final stages of the pipeline include deploying the product’s Terraform IaC and sending notifications to the appropriate personnel.

- Configuration files specific to the application team are pulled down from BitBucket and used to override the baseline product configurations and customize the IaC configuration of the product during provisioning.

- Required global variables and secrets are pulled down from the SSM Parameter Store and Secrets Manager, and are injected into the deployment of the product during provisioning.

- As a post-build task, a message is sent back to the originating Service Catalog CloudFormation template signaling that the product has either successfully deployed or failed during deployment.

  - This signal back to the CloudFormation template dictates whether or not the stack is able to reach a CREATE_COMPLETE status.
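The message flow in the steps above can be sketched as two small functions: one that packages the CloudFormation custom resource event for the broker’s queue, and one that builds the reply the post-build task sends back. The event and response field names (`RequestType`, `ResponseURL`, `StackId`, `RequestId`, `LogicalResourceId`, `Status`, and so on) follow the CloudFormation custom resource contract. The snake_case message keys, the function names, and the choice of `PhysicalResourceId` are assumptions for illustration, not the customer’s actual schema.

```python
"""Sketch: message handed to the Jenkins Service Broker and the reply sent to CloudFormation."""
import json


def build_broker_message(event: dict) -> str:
    """Package a CloudFormation custom resource event for the broker's SQS queue."""
    message = {
        "request_type": event["RequestType"],  # Create, Update, or Delete
        # Fields the post-build task needs in order to answer CloudFormation later.
        "response_url": event["ResponseURL"],
        "stack_id": event["StackId"],
        "request_id": event["RequestId"],
        "logical_resource_id": event["LogicalResourceId"],
        # Product parameters from the template; ServiceToken is plumbing, not user input.
        "parameters": {
            key: value
            for key, value in event.get("ResourceProperties", {}).items()
            if key != "ServiceToken"
        },
    }
    return json.dumps(message)


def build_cfn_response(message: dict, succeeded: bool, reason: str = "") -> dict:
    """Build the JSON body that is PUT to the pre-signed ResponseURL after the build."""
    return {
        "Status": "SUCCESS" if succeeded else "FAILED",
        "Reason": reason or "See Jenkins build log",
        "PhysicalResourceId": message["request_id"],  # hypothetical choice of identifier
        "StackId": message["stack_id"],
        "RequestId": message["request_id"],
        "LogicalResourceId": message["logical_resource_id"],
    }
```

Inside the Lambda handler, the string returned by `build_broker_message` would be passed as the `MessageBody` of an SQS `send_message` call. A `FAILED` status in the reply causes the stack creation to fail and roll back, while `SUCCESS` lets the stack reach CREATE_COMPLETE.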

AWS Services Used

- AWS Infrastructure Scripting – CloudFormation

- AWS Compute Services – EC2, EKS, Lambda

- AWS Storage Services – S3

- AWS Management and Governance Services – Service Catalog, AWS Organizations, SSM

- AWS Security, Identity, Compliance Services – IAM, Key Management Service, Secrets Manager

- AWS Application Integration Services – SNS, SQS

Third-party applications or solutions used

- BitBucket

- Jenkins

- Terraform

- Kubernetes

- Helm

- Python

- OPA (Open Policy Agent)

- HashiCorp Vault

Outcome Metrics

- Roughly 30 products have been developed to be shared across the accounts in the customer’s AWS organization.

- Application teams are now able to request and deploy their required infrastructure much faster than before through automated mechanisms.

- Automated testing, validation, and dozens of policy checks are run against all products before deployment into production to ensure security and compliance requirements are met.

- The process for creating AWS resources moved from a request model that went through a DevOps team to an on-demand model, reducing the time to create resources from over one week to minutes.

Summary

By engaging with Vertical Relevance, the customer increased its developers’ efficiency by speeding up the deployment of AWS infrastructure in a way that is scalable, secure, and within its compliance requirements. Development teams do this by using Service Catalog to create, on demand, AWS resources that are tested and approved to the customer’s standards. With the new solution in place, the customer can progress through its mainframe modernization and cloud migration efforts faster than before. With this framework supporting the modernization effort, the customer will be able to improve existing applications and develop new ones more quickly, allowing it to bring on a variety of new customers quickly and provide them with more up-to-date products and solutions.