AWS Lambda is introducing a new feature called SnapStart for Java, a capability that delivers up to 10x faster startup performance for latency-sensitive Java functions. Typically, when a function is invoked for the first time, and as it scales up to handle traffic, AWS Lambda creates a fresh execution environment. This involves downloading the function code and initializing its dependencies, for example a framework such as Spring Boot. Depending on the size of the code and its dependencies, this initialization can take several seconds and adds significant latency to those requests.

With Lambda SnapStart enabled, the function code is initialized once, when you publish a function version. AWS Lambda then takes a snapshot of the initialized execution environment, persists the encrypted snapshot, and caches it for low-latency access. When the function is first invoked, and as it scales up, Lambda resumes new execution environments from the cached snapshot instead of running the entire initialization phase, which improves startup latency.

This post guides you through deploying a simple Java 11 application, first without SnapStart and then with SnapStart enabled. We then run tests against the application to demonstrate the latency improvements that SnapStart delivers.

Overview

To test SnapStart, we will deploy a Pet Store application written with the Spring Boot 2 framework. We will use the AWS Serverless Application Model (AWS SAM) to build and deploy the application into our AWS account. The deployment creates an AWS Lambda function, an Amazon API Gateway API, and the roles and permissions needed to call the application's API.

Prerequisites

The example has the following prerequisites:

- An AWS account. To sign up:
  - Create an account. For instructions, see Sign Up For AWS.
  - Create an AWS Identity and Access Management (IAM) user. For instructions, see Create IAM User.

- The following software installed on your development machine (or an AWS Cloud9 environment, which comes with all requirements preinstalled):
  - Java Development Kit 11 or higher (for example, Amazon Corretto 11 or OpenJDK 11)
  - Python version 3.7 or higher
  - Apache Maven version 3.8.4 or higher
  - Docker version 20.10.12 or higher
  - AWS CLI 2.4.27 or higher
  - AWS SAM CLI

- Appropriate AWS credentials for interacting with resources in your AWS account

Example Walkthrough

- Clone the project GitHub repository and change into the “snapstart-performance-testing” directory:

```bash
git clone https://github.com/bnusunny/snapstart-performance-testing.git
cd snapstart-performance-testing
```

- Run `sam build` to build the application:

```bash
sam build
```

- A deployment package has now been created in the `.aws-sam` subdirectory.

- Deploy the application into your AWS account by using `sam deploy --guided`:

```bash
sam deploy --guided
```

- Once the deployment completes, the SAM CLI prints the stack's outputs, including the API URL that we will use in our tests.

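If you need the API URL again later, you can read the stack outputs with the AWS CLI. A minimal sketch, assuming AWS CLI v2 with credentials configured; `show_stack_outputs` is a hypothetical helper, and its argument is the stack name you entered during `sam deploy --guided`:

```bash
#!/usr/bin/env bash
# Print the outputs (including the API URL) of the stack created by
# `sam deploy --guided`. show_stack_outputs is a hypothetical helper name.
show_stack_outputs() {
  if [ $# -ne 1 ]; then
    echo "usage: show_stack_outputs STACK_NAME" >&2
    return 1
  fi
  # Print each output as a key/value row.
  aws cloudformation describe-stacks \
    --stack-name "$1" \
    --query 'Stacks[0].Outputs[].[OutputKey,OutputValue]' \
    --output table
}
```

For example, `show_stack_outputs my-snapstart-stack`, where the stack name is a placeholder for your own.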

Testing the Application

Our goal is to understand how AWS Lambda SnapStart affects the latency of our Lambda function. To measure this, we will first benchmark the cold-start and warm-start latency of the function without SnapStart enabled. Once we have this baseline, we will enable SnapStart and rerun the same performance tests.

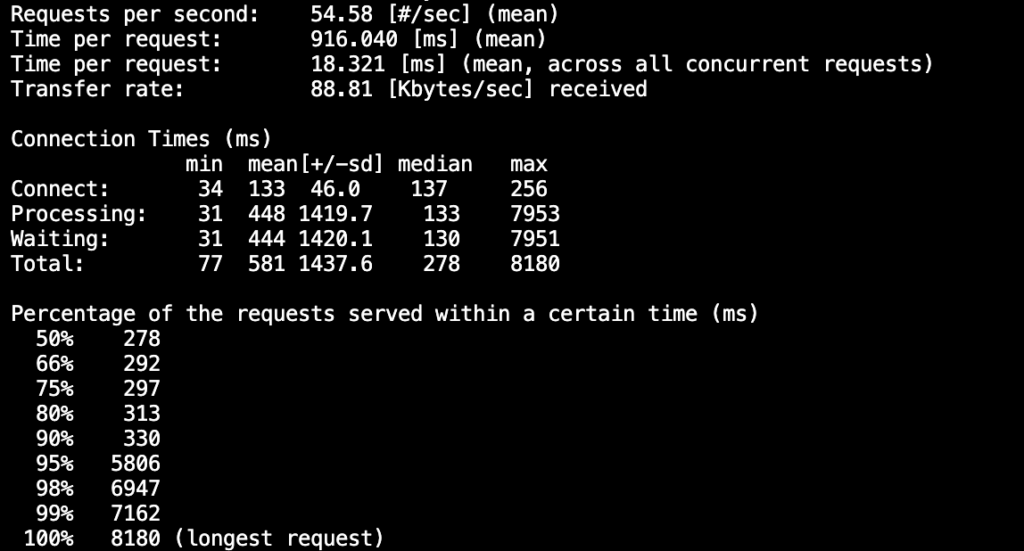

To run our performance tests, we will use Apache Bench (`ab`), an HTTP server benchmarking tool.

- From a terminal, run the following command to send 1,000 requests with a concurrency of 50. Replace the URL with the API URL from the output of the `sam deploy` command that you ran earlier.

```bash
ab -n 1000 -c 50 https://xxxxxxxxx.execute-api.us-east-1.amazonaws.com/Prod/pets
```

- Once the test completes, view the function's logs in the AWS Management Console:
  - Lambda -> Monitor -> View Logs in CloudWatch -> View in Logs Insights

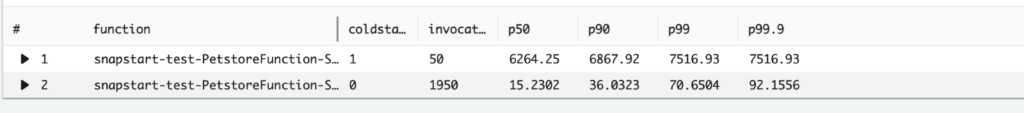

- In the Query Editor, run the following query:

```
filter @type = "REPORT"
| parse @log /\d+:\/aws\/lambda\/(?<function>.*)/
| parse @message /Restore Duration: (?<restoreDuration>.*?) ms/
| stats
    count(*) as invocations,
    pct(@duration+coalesce(@initDuration,0)+coalesce(restoreDuration,0), 50) as p50,
    pct(@duration+coalesce(@initDuration,0)+coalesce(restoreDuration,0), 90) as p90,
    pct(@duration+coalesce(@initDuration,0)+coalesce(restoreDuration,0), 99) as p99,
    pct(@duration+coalesce(@initDuration,0)+coalesce(restoreDuration,0), 99.9) as p99.9
  group by function, (ispresent(@initDuration) or ispresent(restoreDuration)) as coldstart
| sort by coldstart desc
```

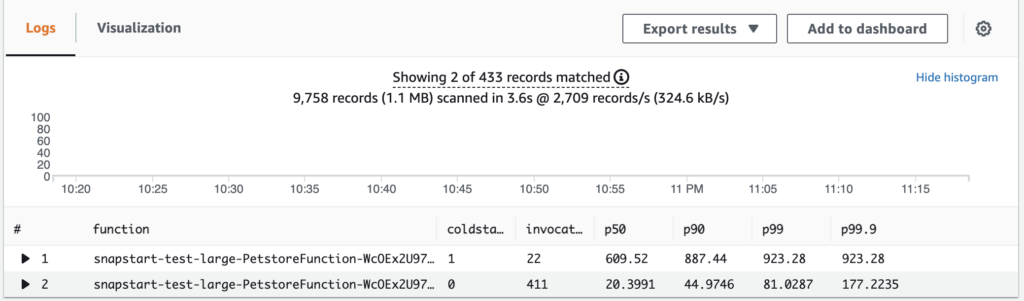

- Results similar to the screenshot should appear, showing request latency for cold starts and warm starts.

- Without SnapStart, cold-start requests took more than 6 seconds to complete, and in some cases more than 7.5 seconds.

- Now let's try with SnapStart enabled. First, clear the function's CloudWatch log group so that the next query only covers the new test run:
  - Lambda -> Monitor -> Metrics -> View Logs in CloudWatch
  - Select all log streams and choose Delete.

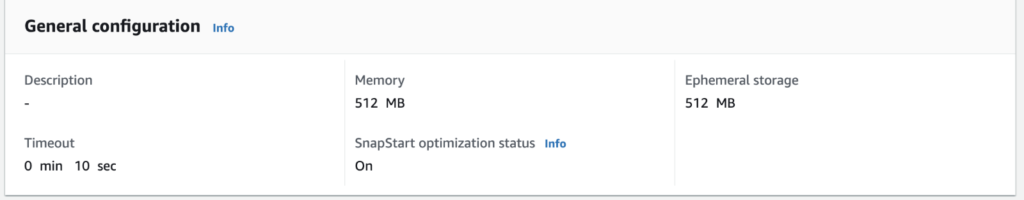

- Test with SnapStart enabled:
  - Enable SnapStart on your Lambda function: go to Configuration -> General configuration -> Edit -> SnapStart -> PublishedVersions.
  - Publish a new version of the function and wait until it is created successfully.
  - Update API Gateway to invoke the newly published function version, and redeploy the API. SnapStart applies only to published versions, so invocations of `$LATEST` do not resume from a snapshot.
  - Repeat the `ab` load test described above.
  - Run the Logs Insights query described above again.
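The console steps for clearing the logs, enabling SnapStart, and publishing a version can also be scripted with the AWS CLI. A minimal sketch, assuming AWS CLI v2 with credentials configured; `FUNCTION_NAME`, `clear_log_streams`, `enable_snapstart_and_publish`, and `snapstart_status` are hypothetical names. Pointing API Gateway at the new version is left out, because the resource IDs depend on your stack.

```bash
#!/usr/bin/env bash
# Sketch: CLI equivalents of the console steps above.
# FUNCTION_NAME is a hypothetical placeholder; set it to the name of the
# function that `sam deploy` created before calling these helpers.
FUNCTION_NAME="${FUNCTION_NAME:-your-function-name}"

# Delete every log stream in the function's log group, so the next
# Logs Insights query only sees the SnapStart test run.
clear_log_streams() {
  local log_group="/aws/lambda/${FUNCTION_NAME}"
  aws logs describe-log-streams \
      --log-group-name "$log_group" \
      --query 'logStreams[].logStreamName' --output text |
    tr '\t' '\n' |
    while IFS= read -r stream; do
      if [ -n "$stream" ]; then
        aws logs delete-log-stream \
          --log-group-name "$log_group" --log-stream-name "$stream"
      fi
    done
}

# Turn on SnapStart for published versions, publish a new version
# (this runs the initialization and creates the snapshot), wait until
# the version is active, and print its version number.
enable_snapstart_and_publish() {
  local version
  aws lambda update-function-configuration \
    --function-name "$FUNCTION_NAME" \
    --snap-start ApplyOn=PublishedVersions > /dev/null
  aws lambda wait function-updated --function-name "$FUNCTION_NAME"
  version=$(aws lambda publish-version \
    --function-name "$FUNCTION_NAME" --query 'Version' --output text)
  aws lambda wait published-version-active \
    --function-name "$FUNCTION_NAME" --qualifier "$version"
  echo "$version"
}

# Show the SnapStart settings of a published version, e.g. to confirm
# that OptimizationStatus is "On".
snapstart_status() {
  aws lambda get-function-configuration \
    --function-name "$FUNCTION_NAME" --qualifier "$1" --query 'SnapStart'
}
```

For example: `export FUNCTION_NAME=<your function name>`, then `clear_log_streams`, `v=$(enable_snapstart_and_publish)`, and `snapstart_status "$v"`.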

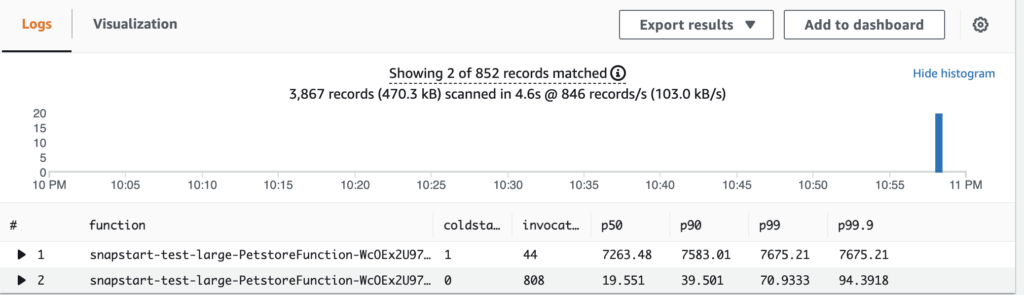
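To cross-check the Logs Insights numbers outside the console, the same aggregation can be approximated locally. A minimal sketch, assuming you have saved Lambda REPORT lines (one per line, each starting with `REPORT`, with the tab-separated fields Lambda writes) to a file; `lambda_latency_stats` is a hypothetical helper. It adds Duration, Init Duration, and Restore Duration as the query does, treats a line as a cold start when Init or Restore Duration is present, and uses nearest-rank percentiles, so results may differ slightly from `pct()`. The p99.9 column is omitted for brevity.

```bash
#!/usr/bin/env bash
# Sketch: approximate the Logs Insights latency query locally.
# Input (stdin): Lambda REPORT lines with tab-separated fields.
# Output: one line per group ("cold" or "warm") with count, p50, p90, p99,
# where latency = Duration + Init Duration + Restore Duration (ms).
lambda_latency_stats() {
  awk -F '\t' '
    /^REPORT/ {
      total = 0; cold = 0
      for (i = 1; i <= NF; i++) {
        f = $i
        sub(/^ +/, "", f)
        v = f
        sub(/^[A-Za-z ]*: /, "", v)   # drop the "Label: " prefix
        sub(/ ms.*$/, "", v)          # drop the unit
        if (f ~ /^Duration: /) {
          total += v
        } else if (f ~ /^(Init|Restore) Duration: /) {
          total += v; cold = 1        # cold start: Init or Restore present
        }
      }
      printf "%s %.3f\n", (cold ? "cold" : "warm"), total
    }' |
  LC_ALL=C sort -k1,1 -k2,2n |
  awk '
    # Nearest-rank percentile over the sorted values v[1..n].
    function pct(p,    r) {
      r = int(p * n / 100)
      if (r * 100 < p * n) r++
      if (r < 1) r = 1
      return v[r]
    }
    function flush() {
      if (n > 0)
        printf "%s count=%d p50=%.2f p90=%.2f p99=%.2f\n", key, n, pct(50), pct(90), pct(99)
    }
    $1 != key { flush(); key = $1; n = 0 }
    { v[++n] = $2 }
    END { flush() }'
}
```

For example, `lambda_latency_stats < report-lines.txt`, where the file name is a placeholder.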

Further Testing

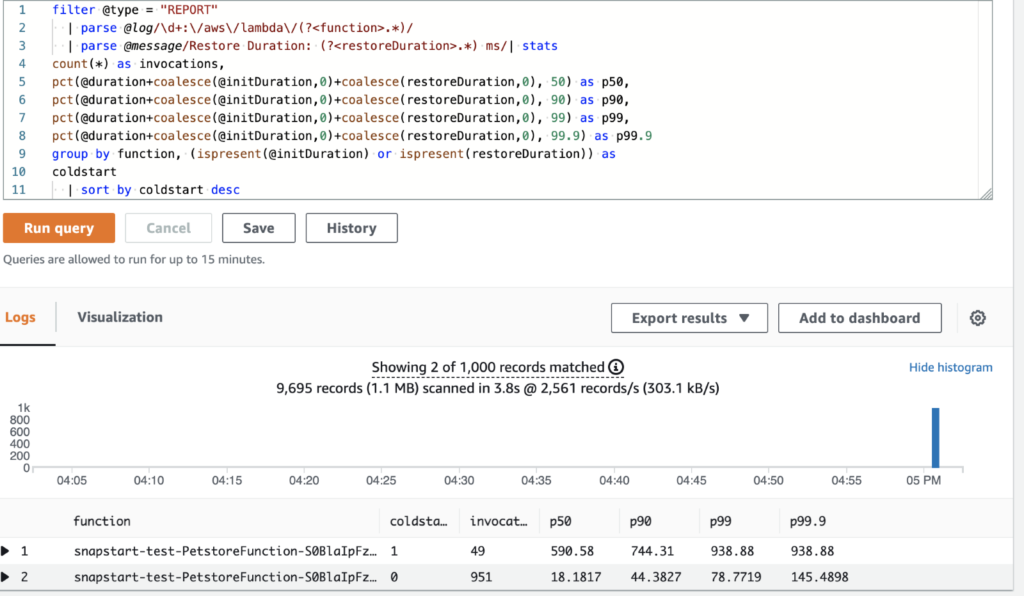

We can further verify the performance gains of SnapStart by deploying our function with a larger deployment package. We added a few arbitrary dependencies to the pom.xml file, increasing the deployment package from 17 MB to 26 MB. Rerunning the tests from the previous section gives the following results.

- Without SnapStart enabled

- With SnapStart enabled

Even with the larger deployment package, we still saw a decrease of almost 90% in cold-start latency.
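A figure like "almost 90%" is a simple percentage decrease between two latency values. To compute the same kind of figure from your own query results, a minimal sketch; `pct_decrease` is a hypothetical helper, and the inputs in the usage line are illustrative, not measurements from this post:

```bash
#!/usr/bin/env bash
# Percentage decrease from a "before" latency to an "after" latency,
# for example a cold-start percentile without and with SnapStart (ms).
# pct_decrease is a hypothetical helper name.
pct_decrease() {
  awk -v before="$1" -v after="$2" 'BEGIN {
    if (before <= 0) { print "before must be > 0" > "/dev/stderr"; exit 1 }
    printf "%.1f\n", (before - after) / before * 100
  }'
}
```

For example, `pct_decrease 1000 100` prints `90.0`.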

Conclusion

In this post, you learned how to deploy a Spring Boot 2 application, powered by a Java 11 AWS Lambda function, with SnapStart enabled. We showed how to deploy that application using the AWS Serverless Application Model (AWS SAM).

For Java 11 functions, a significant share of cold-start time is spent in the initialization phase, and that time grows as your deployment package grows. With SnapStart, this lengthy initialization phase runs once, when you publish a new version of the function. New execution environments then resume from a snapshot of the fully initialized environment, which reduces latency and improves the overall performance of your application. Read the full announcement from AWS here: https://aws.amazon.com/blogs/aws/new-accelerate-your-lambda-functions-with-lambda-snapstart/