About the Customer

The Customer is one of the world’s largest investment management companies, offering investors a large selection of low-cost mutual funds, ETFs, personalized advice, and related services. The Customer has trillions in global assets under management.

Key Challenge/Problem Statement

Since migrating to the AWS cloud over six years ago, the customer has utilized a hybrid-cloud approach. They have been using AWS for data and analytics while still leveraging some databases from their on-premises IBM Mainframe until their current migration efforts are complete. However, the current data access controls in place in their AWS environment are beginning to encounter hard security limits, security and governance best practices are being overlooked, and data consumers are struggling to discover, access, and collaborate within the current data landscape. These issues are not only slowing down the delivery of data and analytics projects but also putting the organization at risk of fines for failing to meet security regulations.

State of Customer’s Business Prior to Engagement

Within the AWS cloud, the customer currently has a decentralized data and analytics approach consisting of a variety of compute and storage solutions that are segregated within each line of business (LoB). Each LoB currently has its own set of AWS accounts spread across Software Development Lifecycle (SDLC) environments (Development, Test, Production). Across all LoBs, there are approximately 100 accounts in total.

The table below outlines the quantities and contents of the different AWS accounts across the organization.

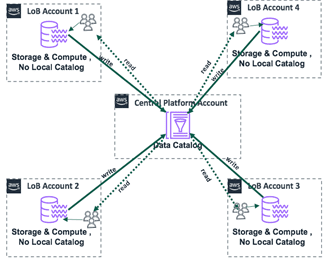

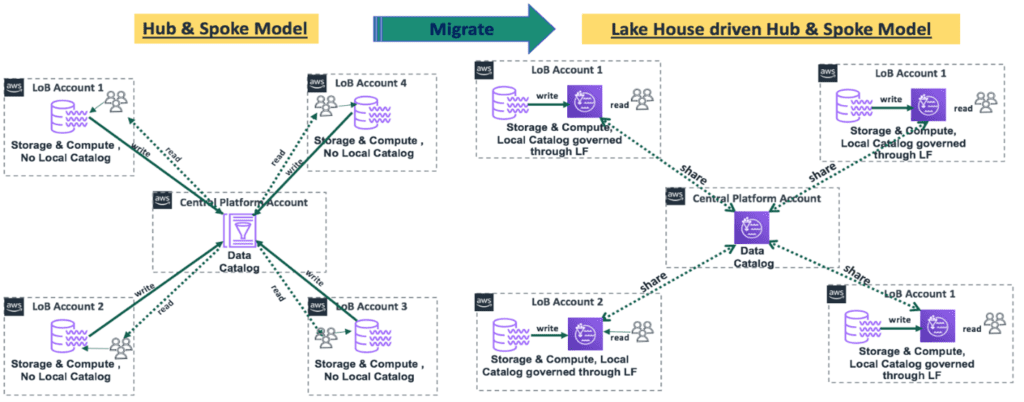

The current approach to managing the interaction between data producers, consumers, and owners is a basic hub and spoke model that connects every account to the platform account for its SDLC environment, but only allows for rigid read/write capabilities. The current hub and spoke model is outlined below.

Due to the constraints of this model, teams are experiencing inefficiencies including, but not limited to, long turnaround times when requesting access to data and lack of oversight over data consumption.

Proposed Solution & Architecture

The first step of our assessment was to conduct discovery to understand the client’s current approach, gaps in capabilities, and the roles of all the necessary stakeholders.

During this discovery phase, we learned that the customer was utilizing many of the AWS services that are needed to implement a Lake House solution. However, while many relevant services are present, the current solutions are not designed and configured to function like a Lake House. For instance, although there is a Glue Metadata Store designated to handle centralized logging and monitoring, it is only interfacing with a small subset of log groups and is lacking governance and observability capabilities in its current state. Further, there is currently a severe gap in the capabilities pertaining to governance, data discovery, collaboration, monitoring, observability, and security.

Based on the design of the current solution and the gaps that needed to be addressed, we decided that the best path forward was to redesign the structure of the governance approach, create a data consumer platform, and leverage the existing compute solutions.

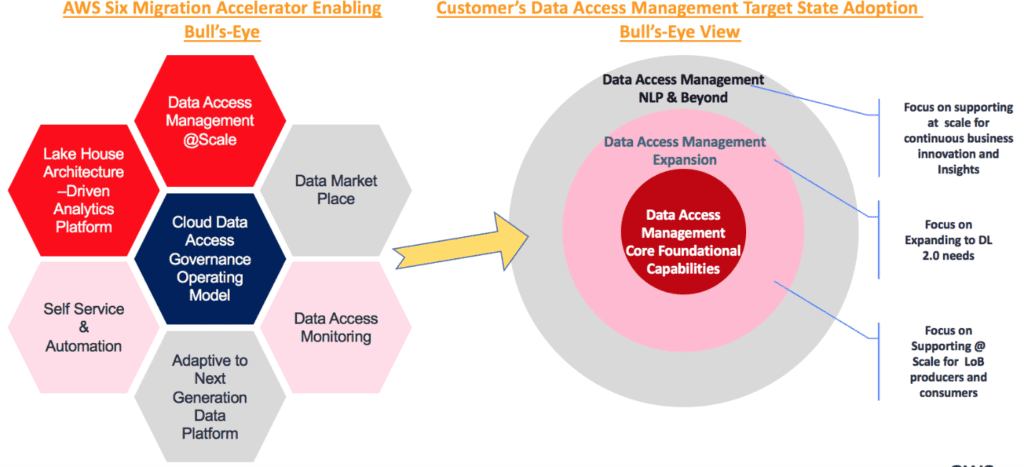

Because the current implementation of the hub and spoke model is rigid and does not allow for flexible access grants (both inside and outside the account), we recommended that the next iteration rely on Lake Formation’s governance features to manage access for data consumers that are internal and external to the account. With this approach, the flexibility of governance in Lake Formation will also enable security teams to build comprehensive monitoring and observability solutions to track data access and address regulatory requirements.
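As a rough illustration of the kind of flexible grant this recommendation depends on, the sketch below builds the arguments for Lake Formation's `GrantPermissions` API (exposed in boto3 as `grant_permissions`) for a consumer outside the producing account. The helper names, account IDs, database, table, and column names are hypothetical placeholders invented for this example; they are not details of the Customer's environment.

```python
from typing import Dict, Optional, Sequence


def build_grant_request(
    producer_account_id: str,
    consumer_principal: str,
    database: str,
    table: str,
    columns: Optional[Sequence[str]] = None,
) -> Dict:
    """Build keyword arguments for the Lake Formation GrantPermissions API.

    If `columns` is given, SELECT is limited to those columns;
    otherwise SELECT is granted on the whole table.
    All argument values used in this example are hypothetical.
    """
    if columns:
        resource = {
            "TableWithColumns": {
                "CatalogId": producer_account_id,
                "DatabaseName": database,
                "Name": table,
                "ColumnNames": list(columns),
            }
        }
    else:
        resource = {
            "Table": {
                "CatalogId": producer_account_id,
                "DatabaseName": database,
                "Name": table,
            }
        }
    return {
        # The principal can be an IAM ARN or, for cross-account sharing,
        # a 12-digit AWS account ID.
        "Principal": {"DataLakePrincipalIdentifier": consumer_principal},
        "Resource": resource,
        "Permissions": ["SELECT"],
    }


def apply_grant(request: Dict) -> None:
    """Send the grant to Lake Formation (requires boto3 and AWS credentials)."""
    import boto3  # imported lazily so the builder works without AWS access

    boto3.client("lakeformation").grant_permissions(**request)


# Hypothetical example: share two columns of one table with another account.
request = build_grant_request(
    producer_account_id="111111111111",
    consumer_principal="222222222222",
    database="sales",
    table="orders",
    columns=["order_id", "amount"],
)
```

Keeping the request builder separate from the API call means the intended grants can be reviewed or logged before anything is applied, which is one way to support the oversight described above.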

To address the gap in security, this recommended approach will allow the customer to leverage their existing Collibra toolset to implement Data Classification and Data Protection strategies that are not possible within their current approach. Collibra will integrate with AWS Glue and AWS Lake Formation to implement field-level tagging, lineage, categorization, and business definitions. These mechanisms will allow the security team to track the flow of data, identify irregularities, and troubleshoot and remediate access issues as soon as they arise.
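To make field-level tagging more concrete, the sketch below turns a column-to-classification mapping into requests for Lake Formation's `AddLFTagsToResource` API (boto3: `add_lf_tags_to_resource`). It does not show the Collibra integration itself; the mapping stands in for classifications that a catalog tool might supply. The function name, tag key, account ID, and table and column names are all assumptions made for illustration.

```python
from collections import defaultdict
from typing import Dict, List


def build_column_tag_requests(
    catalog_id: str,
    database: str,
    table: str,
    column_classifications: Dict[str, str],
    tag_key: str = "classification",  # hypothetical LF-tag key
) -> List[dict]:
    """Build one AddLFTagsToResource request per classification value.

    Columns that share a classification are grouped into a single request.
    The LF-tag key and its allowed values must already exist in
    Lake Formation (for example, created with create_lf_tag).
    """
    columns_by_value = defaultdict(list)
    for column, value in column_classifications.items():
        columns_by_value[value].append(column)

    requests = []
    for value in sorted(columns_by_value):
        requests.append(
            {
                "CatalogId": catalog_id,
                "Resource": {
                    "TableWithColumns": {
                        "CatalogId": catalog_id,
                        "DatabaseName": database,
                        "Name": table,
                        "ColumnNames": sorted(columns_by_value[value]),
                    }
                },
                "LFTags": [
                    {
                        "CatalogId": catalog_id,
                        "TagKey": tag_key,
                        "TagValues": [value],
                    }
                ],
            }
        )
    return requests


# Hypothetical classifications for one table.
requests = build_column_tag_requests(
    catalog_id="111111111111",
    database="sales",
    table="customers",
    column_classifications={"email": "pii", "ssn": "pii", "region": "public"},
)
# Each request could then be sent with:
#   boto3.client("lakeformation").add_lf_tags_to_resource(**request)
```

Once columns carry tags like these, access can be granted by tag value rather than by individual table or column, which is what lets classification drive data protection.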

Finally, with the Core Data Access Management capabilities and Expanded Data Access Management capabilities in place, the final phase of our recommendation addressed the need for Business Innovation and Insights enablement. The goal of this phase is to enable data scientists and data analysts to work as efficiently as possible by providing them with the ability to seamlessly query and analyze the data with tools such as Amazon EMR, Amazon Athena, and Amazon QuickSight.

By implementing the following design, the customer will transform their data and analytics management strategy from several silos that caused headaches for every team into an efficient, centralized Lake House solution that will allow teams to reap the benefits of data and analytics in the cloud.

AWS Services Used

- AWS Infrastructure Scripting – CloudFormation

- AWS Compute Services – Lambda, EC2, ECS

- AWS Storage Services – S3, EFS

- AWS Networking Services – VPC, API Gateway

- AWS Database Services – RDS, DynamoDB

- AWS Developer Tools – CodeCommit, CodeBuild, CodeDeploy, CodePipeline

- AWS Management and Governance Services – Organizations, CloudWatch, CloudTrail, Service Catalog, Control Tower

- AWS Security, Identity, Compliance Services – IAM, KMS, GuardDuty, Resource Access Manager, Single Sign-On

- AWS Analytics Services – Lake Formation, Athena, Glue, Redshift Spectrum, EMR, Kinesis, QuickSight

- Application Integration – Step Functions, Amazon EventBridge, Amazon MQ, SNS, SQS

- AWS Machine Learning Services – SageMaker, SageMaker Studio

- Access Management – CLI, SDK

Third-party applications or solutions used

- Authentication – SailPoint, LDAP, Okta

- Analytics – Tableau, Splunk

- Catalog – Collibra

- Machine Learning – DataRobot, RStudio

- Access Management – CLI, SDK, Highlander, Okta

- Coding Base – Java, Python, Ray, PySpark

- Developer Tools – Bitbucket, Bamboo

Outcome Metrics

- Analysis of the existing data and analytics landscape yielded a comprehensive list of pain points in the current design

- Provided a recommended design to address the top priority pain points by leveraging existing patterns and solutions where possible

Summary

By engaging with AWS and Vertical Relevance, the Customer was able to decide which incremental “Waved” approach best aligns with their needs. The Customer now has a plan to complete the migration to the next Lake House architecture state on AWS while managing risk and delivering business value at each increment.