Self-Service Pipelines

A pipeline is the backbone of a development team’s software release process. Pipelines bring automation, consistency, predictability, and visibility to the release process. It’s widely accepted that if you’re going to release software, you should be using a pipeline.

A deployment pipeline consists of a variety of stages and actions that orchestrate testing and deployment as a software release moves from commit to production. This may seem straightforward, but as more application teams build their own deployment pipelines, variations multiply until managing and governing them becomes a nightmare. A framework approach should therefore be used: it gives pipelines a general structure while still enabling application teams to customize them to their needs.

The Pipeline Foundation solution enables development teams to request a pipeline that comes with all of the necessary components, integrations, and configurations. Teams request pipelines through self-service, and compliance and governance controls are incorporated into every pipeline they receive.

Pipeline Foundations Blueprint

Skeletons

Skeletons are designed for specific functions and are the building blocks of an organization’s pipeline foundation. Customized pipelines are then built on top of Skeletons for specific use cases (programming language, deployment platform, etc.).

Skeletons can define required tests and steps, enabling an organization to ensure that particular validations and controls run in every pipeline. They have very little customization of their own and rely heavily on the development team’s code repository, which keeps them generic enough to support multiple use cases.

Components

- CloudFormation – Skeletons are often defined using CloudFormation

- CodeCommit – Stores skeleton code, Skeleton buildspecs, etc.

- CodePipeline – Used to define the structure of the pipeline and orchestrate the progression from stage to stage

- CodeBuild – Used to execute the actual tasks (launching the environments, running the tests, etc.). This is where tailoring and customization happen

- Service Catalog – Skeleton CloudFormation templates are stored in Service Catalog as products where they can be launched by users or other Service Catalog products

How it works

An organization might have various application and model Skeletons, for example:

- Containerized App Pipeline Skeleton – Defines stages for a containerized application’s building, testing, and deployment process

- Serverless App Pipeline Skeleton – Defines stages for a serverless application’s building, testing, and deployment process

- Model Pipeline Skeleton – Defines stages for moving an ML model through validation, training, and then deployment

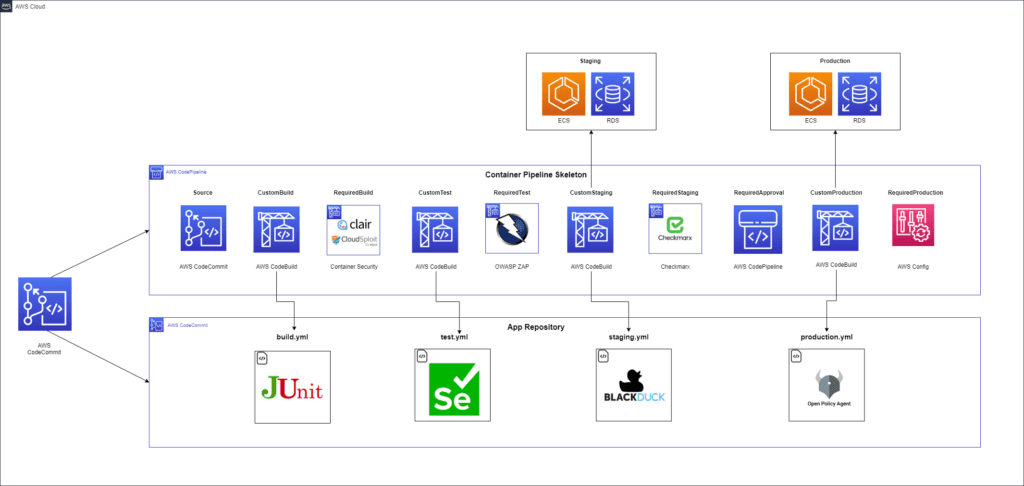

A Containerized App Pipeline Skeleton consists of different stages for deploying a containerized application.

- Source: Pulls code from the application’s Git repository containing the application code, infrastructure code, configuration files, etc.

- CustomBuild: Executes the steps defined in the repository’s build.yml to build the application, run unit tests and static analysis, and then push the image to the container repository

- RequiredBuild: Executes the organization’s required SAST security tests

- CustomTest: Executes the steps defined in the repository’s test.yml to deploy into the acceptance environment and run integration and functional tests

- RequiredTest: Executes the organization’s required DAST security tests

- CustomStaging: Executes the steps defined in the repository’s staging.yml to deploy into the staging environment and run long-running and exploratory tests

- RequiredStaging: Executes the organization’s required IAST security tests

- RequiredApproval: Executes the organization’s required approval gate

- CustomProduction: Executes the steps defined in the repository’s production.yml to deploy into the production environment, often using a blue-green deployment model

- RequiredProduction: Executes the organization’s required post-production deployment steps
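The stage sequence above can be expressed as data. The following Python is a minimal sketch, assuming a hypothetical layout in which each stage runs its own CodeBuild project (named `<app>-<stage>`): Custom stages take their buildspec from the application repository, while Required stages take an organization-owned buildspec that teams cannot edit (the `sast`/`dast`/`iast` labels are placeholders). It emits a `Stages` list in the shape an `AWS::CodePipeline::Pipeline` resource uses.

```python
# Stage order of the Containerized App Pipeline Skeleton.
# Each entry: (stage name, kind, buildspec). "custom" stages use a buildspec
# file from the application repository; "required" stages use an
# organization-owned buildspec (labels here are hypothetical placeholders).
SKELETON_STAGES = [
    ("CustomBuild", "custom", "build.yml"),
    ("RequiredBuild", "required", "sast"),
    ("CustomTest", "custom", "test.yml"),
    ("RequiredTest", "required", "dast"),
    ("CustomStaging", "custom", "staging.yml"),
    ("RequiredStaging", "required", "iast"),
    ("RequiredApproval", "approval", None),
    ("CustomProduction", "custom", "production.yml"),
    ("RequiredProduction", "required", "post-production"),
]


def action(name, category, provider, **extra):
    """One CodePipeline action in the shape CloudFormation expects."""
    return {
        "Name": name,
        "ActionTypeId": {"Category": category, "Owner": "AWS",
                         "Provider": provider, "Version": "1"},
        "RunOrder": 1,
        **extra,
    }


def build_skeleton(app_name, repo_name, branch="main"):
    """Return (stages, projects): the Stages list for a pipeline and, for each
    hypothetical CodeBuild project, where its buildspec comes from."""
    stages = [{"Name": "Source", "Actions": [action(
        "Source", "Source", "CodeCommit",
        Configuration={"RepositoryName": repo_name, "BranchName": branch},
        OutputArtifacts=[{"Name": "SourceOutput"}])]}]
    projects = {}
    for stage_name, kind, spec in SKELETON_STAGES:
        if kind == "approval":
            # Manual approval gate; no CodeBuild project is needed.
            act = action(stage_name, "Approval", "Manual")
        else:
            project = f"{app_name}-{stage_name}"
            # Record whether the buildspec is team-owned or org-owned.
            projects[project] = ("repo" if kind == "custom" else "org", spec)
            act = action(stage_name, "Build", "CodeBuild",
                         Configuration={"ProjectName": project},
                         InputArtifacts=[{"Name": "SourceOutput"}])
        stages.append({"Name": stage_name, "Actions": [act]})
    return stages, projects


stages, projects = build_skeleton("orders", "orders-service")
print([s["Name"] for s in stages])
```

Keeping the Required stages in the skeleton itself, rather than in the team’s buildspecs, is what lets the organization guarantee those checks run in every pipeline.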

Blueprint

Blueprint/Skeleton contains a CloudFormation template for a function-specific CI/CD pipeline, which becomes a Service Catalog product that other users or products can launch.
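Registering a skeleton template as a Service Catalog product can itself be done with CloudFormation. The Python below is a minimal sketch that builds such a template as a dictionary using the `AWS::ServiceCatalog::CloudFormationProduct` and `AWS::ServiceCatalog::PortfolioProductAssociation` resource types; the product name, owner, template URL, and portfolio ID are hypothetical placeholders.

```python
import json


def skeleton_product_template(product_name, template_url, version, portfolio_id):
    """Build a CloudFormation template (as a dict) that registers a skeleton
    template as a Service Catalog product and adds it to a portfolio."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            "SkeletonProduct": {
                "Type": "AWS::ServiceCatalog::CloudFormationProduct",
                "Properties": {
                    "Name": product_name,
                    "Owner": "DevOps",  # hypothetical owning team
                    "ProvisioningArtifactParameters": [{
                        "Name": version,
                        # Service Catalog loads the skeleton template from this URL.
                        "Info": {"LoadTemplateFromURL": template_url},
                    }],
                },
            },
            "PortfolioAssociation": {
                "Type": "AWS::ServiceCatalog::PortfolioProductAssociation",
                "Properties": {
                    "PortfolioId": portfolio_id,
                    # Ref on the product resource returns its product ID.
                    "ProductId": {"Ref": "SkeletonProduct"},
                },
            },
        },
    }


if __name__ == "__main__":
    tpl = skeleton_product_template(
        "containerized-app-pipeline-skeleton",
        "https://example-bucket.s3.amazonaws.com/skeleton/containerized.yaml",
        "v1",
        "port-EXAMPLE",
    )
    print(json.dumps(tpl, indent=2))
```

Versioning through `ProvisioningArtifactParameters` is what allows a skeleton to evolve while teams keep launching a known version.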

Orchestrators

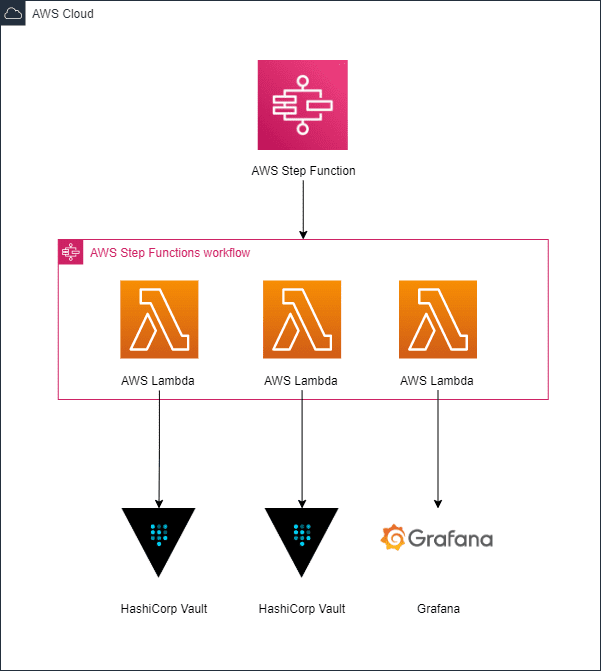

As pipelines grow more complex and organizations want to incorporate more automation, some situations require multi-step, out-of-band setup steps to be performed before a pipeline can be deployed. For these requirements, an Orchestrator is used. Orchestrators are deployed independently and can be triggered when new pipelines are instantiated.

Components

- Step Function – Orchestration logic is defined using a Step Function

- CloudFormation – Orchestrator Step Functions are defined using CloudFormation

- Service Catalog – Orchestrator Step Functions are stored as products in Service Catalog and may be launched by users or other Service Catalog products

How it works

An application that deploys to a Kubernetes cluster could have various out-of-band steps:

- Creating a Kubernetes cluster namespace

- Setting up secrets and parameters

- Registering the application with an internal compliance service

If the Orchestrator Step Function workflow fails for any reason, the entire pipeline product deployment halts.
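The orchestrator workflow and its fail-fast behavior can be sketched in Amazon States Language. The Python below is a minimal sketch, assuming one Lambda function per out-of-band step (the function names, account ID, and Region are hypothetical): it chains the steps as sequential `Task` states, routes any error to a single `Fail` state, and includes a small local simulator that mimics that control flow so the halting behavior can be checked without AWS.

```python
import json

ACCOUNT = "111122223333"  # placeholder account ID
REGION = "us-east-1"      # placeholder Region

# Out-of-band steps from the Kubernetes example; Lambda names are hypothetical.
STEPS = ["CreateNamespace", "SetupSecretsAndParameters", "RegisterWithCompliance"]


def orchestrator_definition(steps=STEPS):
    """Build an Amazon States Language definition that runs the steps in order."""
    states = {}
    for i, step in enumerate(steps):
        state = {
            "Type": "Task",
            "Resource": f"arn:aws:lambda:{REGION}:{ACCOUNT}:function:{step}",
            # Any error in any step routes to the single Fail state.
            "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "SetupFailed"}],
        }
        if i + 1 < len(steps):
            state["Next"] = steps[i + 1]
        else:
            state["End"] = True
        states[step] = state
    states["SetupFailed"] = {
        "Type": "Fail",
        "Error": "OrchestrationFailed",
        "Cause": "An out-of-band setup step failed; pipeline deployment halts.",
    }
    return {"StartAt": steps[0], "States": states}


def simulate(definition, handlers):
    """Walk the definition locally, calling handlers[state_name]() for each Task.
    Returns "SUCCEEDED" or the Error name of the Fail state reached."""
    name = definition["StartAt"]
    while True:
        state = definition["States"][name]
        if state["Type"] == "Fail":
            return state["Error"]
        try:
            handlers[name]()
        except Exception:
            # Mirror the Catch clause: jump to the Fail state.
            name = state["Catch"][0]["Next"]
            continue
        if state.get("End"):
            return "SUCCEEDED"
        name = state["Next"]


if __name__ == "__main__":
    print(json.dumps(orchestrator_definition(), indent=2))
```

Because every `Task` catches `States.ALL` and the `Fail` state ends the execution, no later step runs once one fails, which is the signal the pipeline product uses to stop its own deployment.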

Blueprint

Blueprint/Orchestrator: Contains Step Function Service Catalog products that help automate out-of-band tasks that cannot be handled within a typical CI/CD pipeline.

Pipeline Products

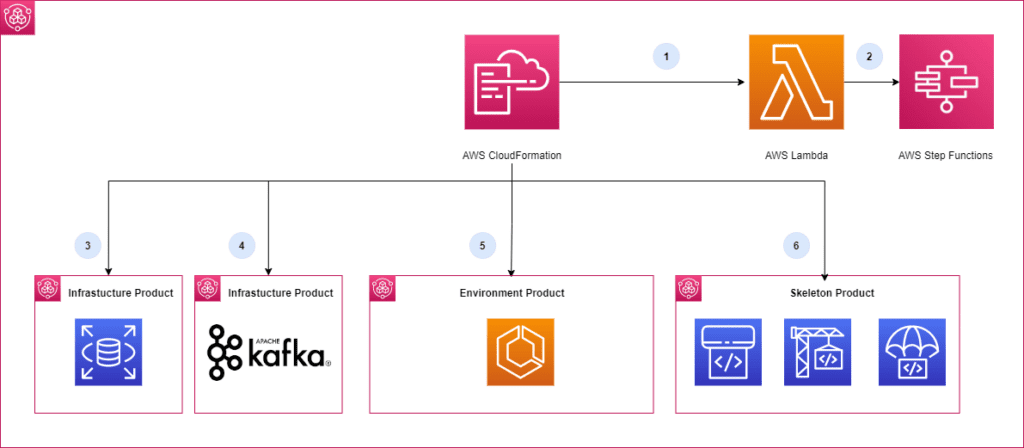

Pipeline products provide development teams with a ‘one-click’ mechanism to get a fully provisioned pipeline. They take care of setting up and configuring the stages, the various environments, and the infrastructure, and of coordinating third-party services.

Components

- CloudFormation – Pipeline products are defined using CloudFormation

- Custom Resources – A custom resource within the CloudFormation template triggers the orchestrator Step Function

- DependsOn – The CodePipeline resource within the CloudFormation template contains a DependsOn attribute referencing the custom resource, which ensures the Step Function completes successfully before the pipeline is deployed

- Service Catalog – Pipeline products are stored as products in Service Catalog portfolios and may be launched by users
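The custom resource and DependsOn pattern described in these components can be sketched as a template. The Python below is a minimal sketch that builds a pipeline product template as a dictionary; the `Custom::OrchestratorTrigger` type name, the parameter names, and the `StateMachineArn`/`ApplicationName` properties passed to the custom resource are all hypothetical, while `ServiceToken` is the property CloudFormation requires to locate the custom resource’s Lambda function.

```python
import json


def pipeline_product_template(app_name, stages):
    """CloudFormation template (as a dict) for a pipeline product: a custom
    resource runs the orchestrator, and the pipeline waits on it via DependsOn."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Parameters": {
            # Hypothetical parameter names supplied when the product is launched.
            "OrchestratorFunctionArn": {"Type": "String"},
            "OrchestratorStateMachineArn": {"Type": "String"},
            "PipelineRoleArn": {"Type": "String"},
            "ArtifactBucket": {"Type": "String"},
        },
        "Resources": {
            "OrchestratorTrigger": {
                "Type": "Custom::OrchestratorTrigger",
                "Properties": {
                    # Lambda that starts the Step Function and reports back.
                    "ServiceToken": {"Ref": "OrchestratorFunctionArn"},
                    "StateMachineArn": {"Ref": "OrchestratorStateMachineArn"},
                    "ApplicationName": app_name,
                },
            },
            "Pipeline": {
                "Type": "AWS::CodePipeline::Pipeline",
                # Not created until the custom resource reports SUCCESS.
                "DependsOn": "OrchestratorTrigger",
                "Properties": {
                    "Name": f"{app_name}-pipeline",
                    "RoleArn": {"Ref": "PipelineRoleArn"},
                    "ArtifactStore": {"Type": "S3",
                                      "Location": {"Ref": "ArtifactBucket"}},
                    "Stages": stages,  # e.g. the skeleton's stage list
                },
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(pipeline_product_template("orders", []), indent=2))
```

If the custom resource reports FAILED, CloudFormation never creates the pipeline and rolls the stack back, which is how an orchestrator failure halts the whole product deployment.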

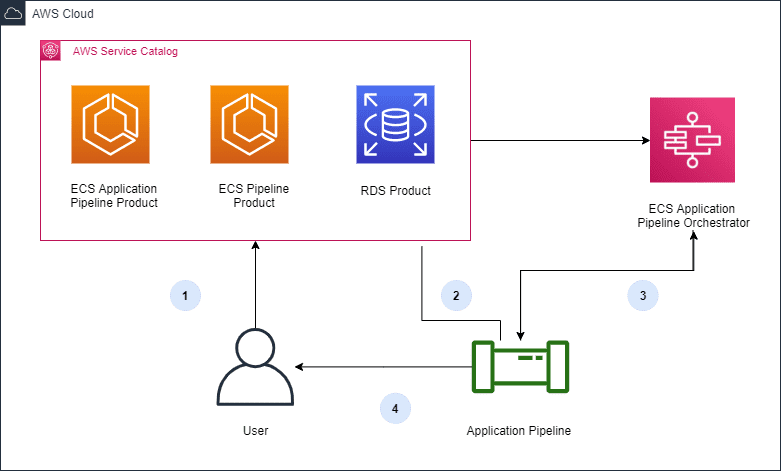

How it works

Pipeline products are essentially aggregates of skeletons and orchestrators, along with various infrastructure and environment products.

- A user requests a pipeline product through Service Catalog

- A CloudFormation custom resource triggers the Orchestrator Step Function to set up all the necessary prerequisites for the application pipeline

- Infrastructure and environment Service Catalog products are launched

- The Skeleton Pipeline Service Catalog products are launched

Blueprint

Blueprint/Products contains pipeline products that combine other products, skeletons, and a Lambda function that calls the orchestrator in order to fully configure the pipeline.
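The Lambda function behind the custom resource has to start the orchestrator and then report the outcome to CloudFormation; if it never responds, the stack waits until the custom resource times out. The Python below is a minimal sketch of such a handler. It assumes the custom resource passes hypothetical `StateMachineArn` and `ApplicationName` properties, and it injects a `start_and_wait` callable so it runs without AWS; in a real deployment that callable would likely wrap the Step Functions `start_execution` and `describe_execution` calls from boto3. It also assumes the workflow finishes within Lambda’s 15-minute limit; longer orchestrations would need an asynchronous pattern.

```python
import json
import urllib.request


def send_response(event, status, reason="", data=None):
    """PUT the custom resource result to CloudFormation's pre-signed ResponseURL."""
    body = json.dumps({
        "Status": status,  # "SUCCESS" or "FAILED"
        "Reason": reason or "See CloudWatch Logs",
        "PhysicalResourceId": event.get("PhysicalResourceId",
                                        event["LogicalResourceId"]),
        "StackId": event["StackId"],
        "RequestId": event["RequestId"],
        "LogicalResourceId": event["LogicalResourceId"],
        "Data": data or {},
    }).encode()
    req = urllib.request.Request(event["ResponseURL"], data=body, method="PUT",
                                 headers={"Content-Type": ""})
    urllib.request.urlopen(req)


def make_handler(start_and_wait, send=send_response):
    """Build a Lambda handler; start_and_wait(arn, input) returns the final
    execution status string, e.g. "SUCCEEDED" or "FAILED"."""
    def handler(event, context):
        try:
            if event["RequestType"] == "Delete":
                # Nothing to undo in this sketch; let stack deletion proceed.
                send(event, "SUCCESS")
                return
            props = event["ResourceProperties"]
            status = start_and_wait(props["StateMachineArn"],
                                    {"ApplicationName": props["ApplicationName"]})
            if status == "SUCCEEDED":
                send(event, "SUCCESS", data={"OrchestratorStatus": status})
            else:
                send(event, "FAILED", reason=f"Orchestrator ended with {status}")
        except Exception as exc:
            # Always respond, so the stack fails fast instead of hanging.
            send(event, "FAILED", reason=str(exc))
    return handler
```

Reporting FAILED on any non-success status is what turns an orchestrator failure into a stack rollback.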

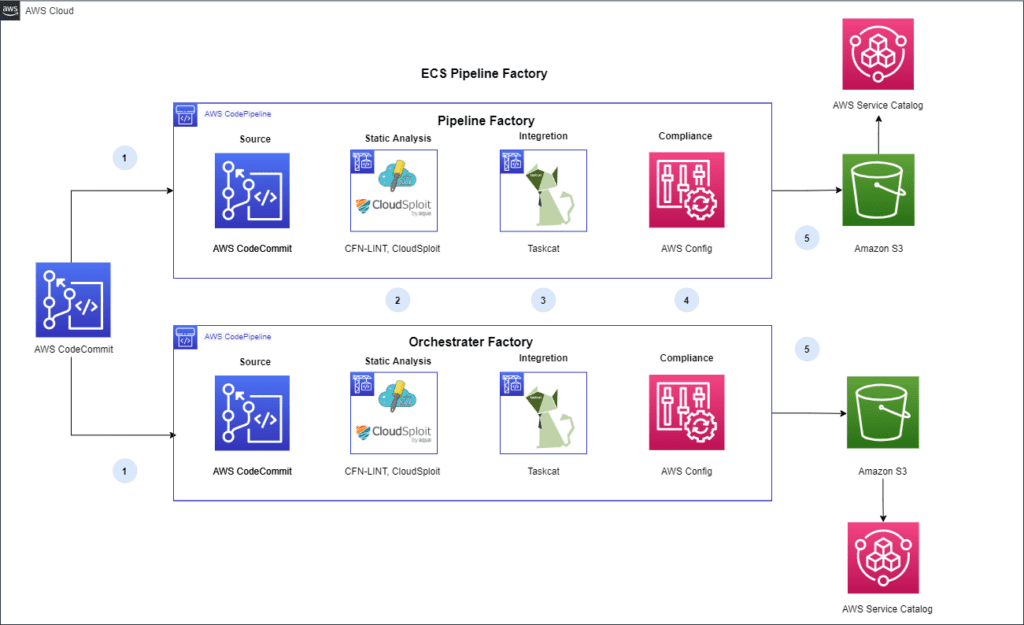

Pipeline Factories

Pipeline Products can be put through their own deployment pipeline where testing and analysis can be done to ensure they align with organizational policies. When the products clear the pipeline stages, they are deployed to Service Catalog.

Components

- CodeCommit – Stores pipeline code

- CodePipeline – Used to define the structure of the pipeline

- CodeBuild – Used to execute the tests against the pipeline templates

How it works

Skeletons, Orchestrators, and Pipeline products are all stored in Git repositories and have pipelines that test and validate their compliance with organizational policies.

- Commit: Pulls the CloudFormation template from its version control repository

- Proactive Security Check: cfn-lint validates CloudFormation YAML/JSON templates against the CloudFormation resource specification and additional checks, including valid values for resource properties and best practices.

- Proactive Integration Testing: Taskcat deploys the AWS CloudFormation template in multiple AWS Regions and generates a report with a pass/fail grade for each region

- Reactive Security Check (Optional): The CloudFormation template is deployed in an isolated AWS account, and AWS Config rules check the resulting resources for compliance.

- Deployment to Service Catalog: The product is deployed to Service Catalog, a Service Catalog portfolio is created containing the product, and access is granted to defined IAM users. The pipeline product is now ready for development teams to consume.
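Beyond cfn-lint and TaskCat, a factory can run organization-specific policy checks in a CodeBuild step. The Python below is a minimal sketch of one such check, assuming JSON-format templates and a hypothetical policy that every pipeline must contain the organization’s Required stages in a fixed relative order; the stage list is illustrative, not a prescribed standard.

```python
import json
import sys

# Hypothetical organizational policy: these stages must appear, in this
# relative order, in every CodePipeline defined by a pipeline product.
REQUIRED_STAGES = ["RequiredBuild", "RequiredTest", "RequiredStaging",
                   "RequiredApproval", "RequiredProduction"]


def check_required_stages(template):
    """Return a list of violation messages (an empty list means compliant)."""
    violations = []
    pipelines = {name: res for name, res in template.get("Resources", {}).items()
                 if res.get("Type") == "AWS::CodePipeline::Pipeline"}
    if not pipelines:
        violations.append("no AWS::CodePipeline::Pipeline resource found")
    for name, res in pipelines.items():
        stage_names = [s["Name"]
                       for s in res.get("Properties", {}).get("Stages", [])]
        missing = [s for s in REQUIRED_STAGES if s not in stage_names]
        present = [s for s in stage_names if s in REQUIRED_STAGES]
        if missing:
            violations.append(f"{name}: missing required stages {missing}")
        elif present != REQUIRED_STAGES:
            violations.append(f"{name}: required stages out of order {present}")
    return violations


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage in a CodeBuild step: python check_stages.py template.json
    with open(sys.argv[1]) as f:
        problems = check_required_stages(json.load(f))
    for p in problems:
        print(p)
    # A non-zero exit code fails the build and blocks promotion.
    sys.exit(1 if problems else 0)
```

A non-zero exit fails the CodeBuild action, so a non-compliant product never reaches the Deployment to Service Catalog stage.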

Blueprint

Blueprint/Deploy contains the necessary scripts and CloudFormation templates to automate the deployment and delivery of these self-service Pipeline products.

Benefits

- Pipelines are continually developed with their own Software Development Life Cycle (SDLC), which improves the developer experience

- Pipelines are pre-built with connections to all the services they require

- Pipelines are provided via self-service

- Pipelines are codified to adhere to corporate standards and continually tested as changes occur

End Result

The end result is a self-service method for development teams to request pipelines that are delivered in a structured manner that allows for flexibility inside defined constraints. Skeletons, Orchestrators, and Pipeline products are managed by a DevOps team and have their own backlog of features that development teams request. Development teams are able to work with autonomy while the necessary governance controls are applied.

- A user requests a pipeline by launching the Service Catalog pipeline product

- The pipeline product launches other products that are needed for the pipeline

- The orchestrator is called to perform any out-of-band activities

- The fully provisioned and configured pipeline is provided back to the user for use

Interested in learning more?

If you are looking to bring automation, consistency, predictability, and visibility to your software release process, contact us today.