About the Customer

The Customer is an American multinational financial services corporation. It is one of the largest asset managers in the world, with trillions of dollars in assets under management. The Customer operates a brokerage firm, manages a large family of mutual funds, and provides fund distribution, investment advice, retirement services, index funds, wealth management, cryptocurrency, securities execution and clearance, and life insurance.

Key Challenge / Problem Statement

The Customer maintained a list of the AWS services that were approved or not approved for use within their AWS Organization. Access to these AWS services was enforced through Service Control Policies (SCPs) applied to each Organizational Unit (OU). Whenever an AWS service was added to or removed from the approved/non-approved list, each OU’s SCP had to be updated manually. Manually updating the SCPs for every OU (50+ OUs) could take over an hour and was prone to errors. SCPs that were misconfigured or deployed late could in turn lead to security issues.

Additionally, to practice the principle of least privilege, access to individual AWS services within each OU was governed by an Allow/Deny SCP. AWS services were added to or removed from this SCP based on usage data pulled from AWS Access Reports; if a particular AWS service was not being used within the OU, access to that service was denied. A process was also needed to validate that adding an AWS service to the deny SCP would not cut off access to users that were indeed actively using the service. Manually cross-checking service usage with active IAM permissions was extremely time-consuming and prone to false positives and other general errors.

The customer was looking to increase agility, improve their ability to scale, and reduce overall errors. The challenges faced in those three key areas included the following:

- Agility – The process was initially manual and deploying changes across multiple OUs took hours.

- Scalability – The same process needed to be repeated for every OU that currently existed and any that might be added in the future. With more than 50 OUs, this was already a problem, and it would become worse as the customer continued to scale.

- Error Rates – Manually cross-checking roles and services for validation could introduce human errors and false positives that could negatively affect applications across the entire organization.

Vertical Relevance was tasked with automating the entire process, from code check-in to SCP deployment, with a focus on increasing the speed of deployment and reducing errors caused by manual steps, thus improving the overall security of the customer accounts.

State of Customer’s Business Prior to Engagement

Access to specific AWS services was managed by Allow/Deny SCPs on an OU-by-OU basis. To determine which AWS services should be blocked in each OU, the security team had to query AWS access reports and check whether a service had been accessed and, if so, whether the access was authorized. Since AWS access reports could give false-positive results (for example, users accidentally navigating to a service without actually using it), OUs would often be allowed access to AWS services that they did not use, which violated the principle of least privilege and would increase the blast radius if an IAM entity were breached. Additionally, because the process was manual, there was significant lag between the time the access reports were run and the time the SCP changes were deployed.

Proposed Solution & Architecture

Vertical Relevance built a CI/CD pipeline that allowed the Security Team to efficiently create and manage SCPs and run them through automated tests at different stages of the pipeline before automatically deploying the changes. To eliminate the time-consuming and error-prone manual processes, the pipeline included a stage that ran automated tests to analyze proposed SCP changes and their effect on AWS service access. In addition, two IAM permissions boundaries were developed to make delegated IAM roles more secure.

DevOps Pipeline Automation

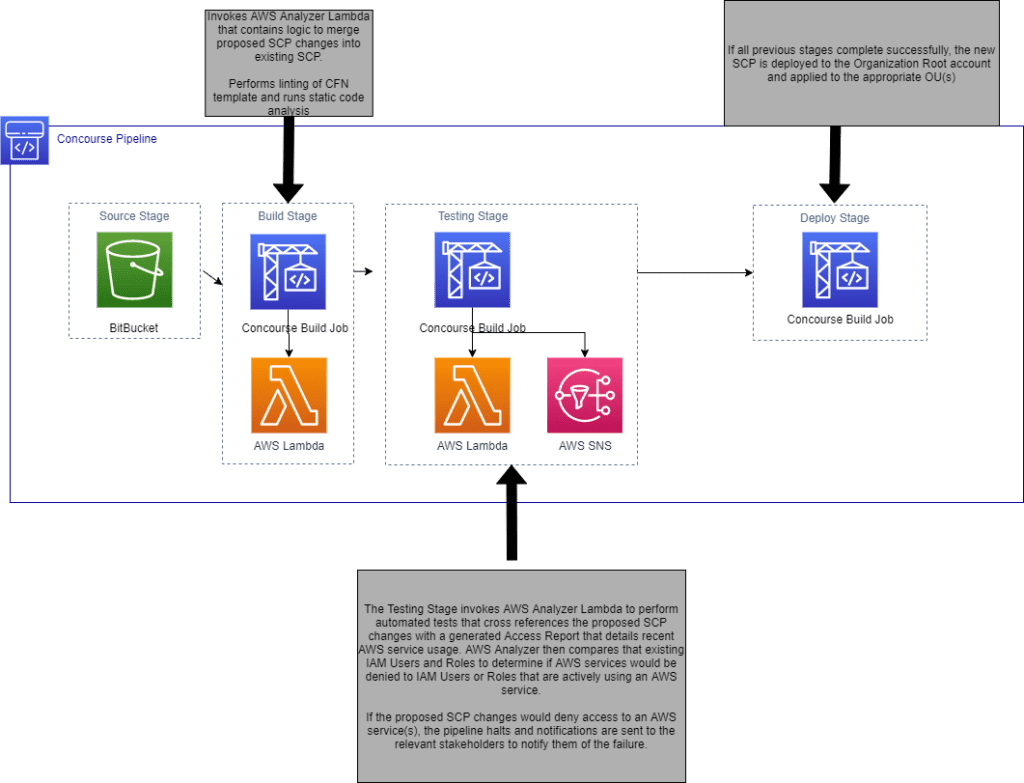

The fully automated pipeline was defined in code, which allowed the customer to rapidly deploy SCP changes across a wide (and growing) number of OUs. The pipeline, as defined in CloudFormation, consisted of validation, testing, and deployment stages. The execution of the validation and testing stages was fully automated via a Lambda that leveraged the AWS Analyzer Python library. A separate pipeline, also defined in CloudFormation, built and deployed that Lambda and included the following stages:

- Build Stage: Automatically handled static analysis and unit testing of the Python code, stored the IaC artifacts, packaged the Python code and prepared it for deployment.

- Test Stage: Deployed the Lambda to a staging environment and conducted automated integration tests.

- Deployment Stage: Deployed the newest version of the Lambda to the production environment via a Blue/Green release model. If all integration tests are successful, traffic is incrementally shifted (1% per second) from the blue Lambda to the green Lambda. By utilizing CloudWatch metrics and CloudWatch alarms, if error rates on the green Lambda begin to spike before 100% of traffic has shifted to it, a rollback can be initiated and traffic will be shifted back to the blue Lambda.
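The incremental shift and rollback described in the Deployment Stage can be sketched as a small simulation. This is a minimal illustration only: the function name `shift_traffic`, the `error_rate_for` callback (which stands in for reading a CloudWatch metric), and the 5% error threshold are all assumptions made for the example, not details of the customer's pipeline.

```python
from typing import Callable, List, Tuple


def shift_traffic(
    error_rate_for: Callable[[int], float],
    step_percent: int = 1,
    max_error_rate: float = 0.05,  # hypothetical alarm threshold
) -> Tuple[str, List[int]]:
    """Shift traffic from blue to green in fixed steps.

    error_rate_for(green_percent) is a stand-in for a CloudWatch metric
    lookup. Returns the final status and the history of green percentages.
    """
    history: List[int] = []
    green = 0
    while green < 100:
        green = min(100, green + step_percent)
        history.append(green)
        # A breached threshold plays the role of a CloudWatch alarm firing.
        if error_rate_for(green) > max_error_rate:
            history.append(0)  # rollback: all traffic returns to blue
            return "rolled_back", history
    return "completed", history


# Healthy release: green never errors, so the shift runs to 100%.
healthy_status, healthy_history = shift_traffic(lambda pct: 0.0)

# Unhealthy release: errors spike once green carries 10% of traffic.
bad_status, bad_history = shift_traffic(lambda pct: 0.2 if pct >= 10 else 0.0)
```

In the second call the shift stops at 10% and the final history entry is 0, which models traffic being sent back to the blue Lambda.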

The validation stage of the SCP deployment pipeline was responsible for pulling in the proposed changes to the SCP, running automation scripts to merge those changes into the existing SCPs, and providing an updated CloudFormation template as output. Finally, the new CloudFormation template is linted to ensure that it does not contain any syntax errors, and static code analysis is performed to ensure that the template does not violate any security or compliance requirements.
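A minimal sketch of the merge-and-render step might look like the following. It assumes a deny-list SCP whose first statement holds a list of denied actions, and it renders the result as an `AWS::Organizations::Policy` resource; the case study does not say which resource type or file layout was actually used, so the function names, the SCP name, and the OU ID below are hypothetical.

```python
import copy
from typing import Iterable


def merge_scp(existing: dict, deny_add: Iterable[str], deny_remove: Iterable[str]) -> dict:
    """Return a copy of the SCP with denied actions added and removed.

    Assumes the first statement is a Deny statement with a list of actions.
    """
    merged = copy.deepcopy(existing)
    statement = merged["Statement"][0]
    actions = (set(statement["Action"]) | set(deny_add)) - set(deny_remove)
    statement["Action"] = sorted(actions)
    return merged


def render_template(name: str, scp: dict, target_ids: Iterable[str]) -> dict:
    """Wrap the SCP in a CloudFormation template (as a Python dict)."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            name: {
                "Type": "AWS::Organizations::Policy",
                "Properties": {
                    "Name": name,
                    "Type": "SERVICE_CONTROL_POLICY",
                    "Content": scp,
                    "TargetIds": list(target_ids),
                },
            }
        },
    }


existing_scp = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Deny", "Action": ["redshift:*", "sagemaker:*"], "Resource": "*"}
    ],
}

# Proposed change: newly deny Glue, stop denying SageMaker.
merged_scp = merge_scp(existing_scp, deny_add=["glue:*"], deny_remove=["sagemaker:*"])
template = render_template("DenyUnapprovedServices", merged_scp, ["ou-example-1"])
```

The rendered template is what a linter and a static analysis tool would then inspect before the pipeline moves on.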

Upon successful validation of the CloudFormation template, the pipeline proceeds to the testing stage. Here, automated scripts test how the proposed changes would affect existing users and roles within the OU. These scripts automate what was originally a time-consuming and error-prone manual process. If either the validation or the testing stage fails for any reason, the pipeline halts and alerts the appropriate stakeholders of the failure. In this way, the pipeline cannot deploy a new SCP unless it has passed both stages.
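The halt-and-alert behavior can be illustrated with a small stage runner. This is a sketch under assumptions: the stage functions and the `notify` callback are hypothetical placeholders (in practice the alert might be an SNS publish), and the real pipeline's orchestration is not described in this level of detail.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], None]]


def run_pipeline(stages: List[Stage], notify: Callable[[str], None]) -> List[str]:
    """Run stages in order; stop at the first failure and send an alert."""
    completed: List[str] = []
    for name, action in stages:
        try:
            action()
        except Exception as exc:
            notify(f"Stage '{name}' failed: {exc}")
            break  # later stages, including deployment, never run
        completed.append(name)
    return completed


def validate_template() -> None:
    pass  # placeholder: linting and static analysis would happen here


def check_service_access() -> None:
    raise RuntimeError("SCP would block an in-use service")


deployed: List[str] = []


def deploy_scp() -> None:
    deployed.append("scp")


alerts: List[str] = []
completed = run_pipeline(
    [
        ("validation", validate_template),
        ("testing", check_service_access),
        ("deployment", deploy_scp),
    ],
    alerts.append,
)
```

Because the testing stage raises, only validation completes, one alert is recorded, and `deploy_scp` is never called.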

If no issues are found during the testing phase, the pipeline proceeds to the deployment stage where the SCP is deployed to the Organization Root account and applied to the appropriate OUs.

Access Analyzer

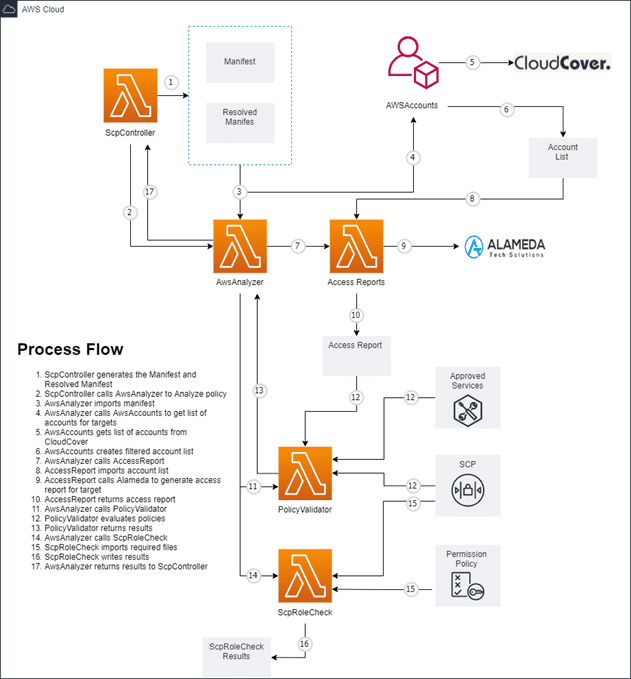

To perform SCP analysis, all changes to individual OUs’ SCPs are centralized in a single GitHub repository, and a manifest file within the repository tracks the SCPs and the OU(s) in which they are deployed. Each time a pull request is merged into the Master branch, an automated pipeline is triggered and gathers all the changes made to the SCPs. These changes are cross-referenced with the manifest file to generate a “resolved manifest” that is divided into four sections: Create, Add, Update, and Remove. These sections contain the names and paths of the SCPs along with their deploy targets.
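One way to picture the resolved manifest is as a diff between the previous and the current manifest. The sketch below is an assumption-heavy illustration: the manifest shape (SCP path mapped to a list of OU targets), the meaning given to each of the four sections, and the placeholder paths and OU names are all hypothetical, since the case study does not define them.

```python
from typing import Dict, List, Set

Manifest = Dict[str, List[str]]  # SCP path -> OU targets (assumed shape)


def resolve_manifest(old: Manifest, new: Manifest, changed_files: Set[str]) -> Dict[str, Manifest]:
    """Split manifest changes into Create, Add, Update, and Remove sections.

    Assumed semantics:
      Create - SCP did not exist before
      Add    - existing SCP is attached to additional targets
      Update - existing SCP content changed for targets it already had
      Remove - SCP is detached from targets it previously had
    """
    resolved: Dict[str, Manifest] = {"Create": {}, "Add": {}, "Update": {}, "Remove": {}}
    for path, targets in new.items():
        new_targets = set(targets)
        if path not in old:
            resolved["Create"][path] = sorted(new_targets)
            continue
        old_targets = set(old[path])
        added = new_targets - old_targets
        if added:
            resolved["Add"][path] = sorted(added)
        kept = new_targets & old_targets
        if path in changed_files and kept:
            resolved["Update"][path] = sorted(kept)
    for path, targets in old.items():
        removed = set(targets) - set(new.get(path, []))
        if removed:
            resolved["Remove"][path] = sorted(removed)
    return resolved


old_manifest = {"scps/base.json": ["ou-a", "ou-b"], "scps/legacy.json": ["ou-c"]}
new_manifest = {"scps/base.json": ["ou-b", "ou-d"], "scps/data.json": ["ou-a"]}
resolved = resolve_manifest(
    old_manifest, new_manifest, changed_files={"scps/base.json", "scps/data.json"}
)
```

Keeping the four sections separate lets later steps treat a brand-new SCP differently from a content change to one that is already deployed.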

To determine whether any of the proposed SCP changes would block access to AWS services that are currently in use, our script extracts the AWS account numbers and the paths of the SCPs that are to be deployed into the respective accounts. For each AWS account, the script generates an AWS access report. For each SCP that is to be added or updated for an AWS account, the script parses the SCP and determines whether each statement allows or denies an AWS service. This allow/deny list is then compared against the access report, and any instance where an SCP statement denies a service that is in use is recorded. This process is repeated for each AWS account, and the output is captured in a Python dictionary that is returned upon completion.
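The comparison step can be sketched as follows. To stay self-contained, the example uses in-memory sample data in place of a real access report, and it only recognizes statements of the form `service:*`; conditions, `NotAction`, and the implicit denies of allow-list SCPs are deliberately ignored. The function names and account numbers are hypothetical.

```python
from typing import Dict, List, Set


def _as_list(value) -> list:
    """SCP fields may hold a single item or a list; normalize to a list."""
    return list(value) if isinstance(value, (list, tuple)) else [value]


def classify_services(scp: dict) -> Dict[str, Set[str]]:
    """Collect service prefixes that statements allow or deny wholesale."""
    result: Dict[str, Set[str]] = {"Allow": set(), "Deny": set()}
    for statement in _as_list(scp["Statement"]):
        for action in _as_list(statement.get("Action", [])):
            service, _, operation = action.partition(":")
            if operation == "*":  # only whole-service statements count here
                result[statement["Effect"]].add(service.lower())
    return result


def find_blocked_in_use(
    scps_by_account: Dict[str, List[dict]],
    in_use_by_account: Dict[str, Set[str]],
) -> Dict[str, List[str]]:
    """Return, per account, the in-use services that a proposed SCP denies."""
    findings: Dict[str, List[str]] = {}
    for account, scps in scps_by_account.items():
        in_use = in_use_by_account.get(account, set())
        blocked: Set[str] = set()
        for scp in scps:
            blocked |= classify_services(scp)["Deny"] & in_use
        if blocked:
            findings[account] = sorted(blocked)
    return findings


proposed_scp = {
    "Version": "2012-10-17",
    "Statement": [{"Effect": "Deny", "Action": ["s3:*", "sagemaker:*"], "Resource": "*"}],
}
findings = find_blocked_in_use(
    scps_by_account={"111111111111": [proposed_scp], "222222222222": [proposed_scp]},
    in_use_by_account={"111111111111": {"s3", "lambda"}, "222222222222": {"ec2"}},
)
```

Here only the first account is flagged, because it actively uses S3 while the proposed SCP would deny it.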

Once the analysis is complete, logic within the SCP deployment script determines whether the pipeline should proceed with deployment, based on how many in-use services might be blocked. If the pipeline fails, the deployment of the SCPs is halted until a new pull request containing any fixes is merged to the Master branch of the repository. Automating the validation of the proposed new SCP allows the team to “fail fast” and quickly remediate any errors found. Mean Time to Recover decreased by over 50% and overall Cycle Time decreased by ~250%.
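A deployment gate of this kind can be as small as the sketch below. The `max_blocked` threshold and the shape of the findings dictionary (account number mapped to a list of blocked in-use services) are assumptions for illustration; the actual threshold used by the customer is not stated.

```python
from typing import Dict, List


def should_deploy(findings: Dict[str, List[str]], max_blocked: int = 0) -> bool:
    """Allow deployment only if few enough in-use services would be blocked."""
    total_blocked = sum(len(services) for services in findings.values())
    return total_blocked <= max_blocked


def gate_exit_code(findings: Dict[str, List[str]], max_blocked: int = 0) -> int:
    """Map the decision to a process exit code: nonzero halts the pipeline."""
    return 0 if should_deploy(findings, max_blocked) else 1


clean_run = gate_exit_code({})
blocked_run = gate_exit_code({"111111111111": ["s3"]})
```

With the default threshold of zero, a single blocked in-use service is enough to stop the deployment until a fix is merged.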

Permission Boundaries

To complement the SCPs that were deployed into the AWS Organization, AWS and Vertical Relevance developed a pair of IAM permissions boundaries to be attached to delegated roles within certain accounts. The first permissions boundary, DelegatedAdminPermissionsBoundary, allowed a role to create and manage IAM roles only if the second permissions boundary was attached to those roles. The second permissions boundary, DelegatedDevPermissionsBoundary, restricted a role to only the AWS services and specific resources that it needed to manage. These permissions boundaries provided a further line of defense against improperly applied permissions, ensuring that delegated roles could not create unbounded roles or otherwise escalate their permissions beyond the boundary.
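The delegation pattern can be illustrated with a policy document built in Python. This is a sketch of the general technique, not the customer's actual policy: the action list, statement IDs, and account number are assumptions, while `iam:PermissionsBoundary` is the IAM condition key commonly used to require that a specific boundary be attached.

```python
import json


def delegated_admin_boundary(
    account_id: str,
    dev_boundary_name: str = "DelegatedDevPermissionsBoundary",
) -> dict:
    """Build a boundary that permits role management only for bounded roles."""
    boundary_arn = f"arn:aws:iam::{account_id}:policy/{dev_boundary_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ManageRolesOnlyWithBoundary",
                "Effect": "Allow",
                "Action": [
                    "iam:CreateRole",
                    "iam:AttachRolePolicy",
                    "iam:DetachRolePolicy",
                    "iam:PutRolePolicy",
                    "iam:DeleteRolePolicy",
                    "iam:PutRolePermissionsBoundary",
                ],
                "Resource": f"arn:aws:iam::{account_id}:role/*",
                # The request must carry the dev boundary, or it is not allowed.
                "Condition": {"StringEquals": {"iam:PermissionsBoundary": boundary_arn}},
            },
            {
                "Sid": "DenyBoundaryRemoval",
                "Effect": "Deny",
                "Action": "iam:DeleteRolePermissionsBoundary",
                "Resource": "*",
            },
        ],
    }


# Placeholder account number, used for illustration only.
policy = delegated_admin_boundary("111111111111")
policy_json = json.dumps(policy, indent=2)
```

The explicit deny on removing a boundary closes the most direct escalation path, since a role created with the boundary cannot later have it stripped by the delegated admin.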

AWS Services Used

AWS Infrastructure Scripting – CloudFormation

AWS Management and Governance Services – CloudWatch, Organizations, Access Advisor, IAM

AWS Storage Services – S3

AWS Compute Services – EC2, Lambda

AWS Application Integration Services – SNS

Third-party applications or solutions used

- Concourse

- CloudCover

- Alameda

Outcome Metrics

- 50% reduction in Mean Time to Recover

  - The previous process required the Security Team to manually cross-check roles with in-use services, which could lead to human errors affecting applications across the organization. Now, the customer has a consistent way to validate AWS services each time they are added to or removed from individual OUs’ Service Control Policies.

  - The entire process from code check-in to SCP deployment is now 100% automated, drastically reducing human errors.

  - Fully automated validation and testing allowed errors to be caught early, before they reached deployment. By structuring the pipeline to run fast validation tests first, followed by longer-running integration tests, the customer saw a 50% reduction in their Mean Time to Recover (a metric used to track how long it takes a failed pipeline to “get back to green”). Because syntax mistakes and unit test failures surfaced in the earliest stages, developers could rectify them quickly without first waiting for the lengthy deployment process.

- 250% reduction in Cycle Time

  - The previous process was manual, and the end-to-end process could take several hours. Automation brought that time down to less than 15 minutes.

  - By automating the testing and validation of the SCPs within the pipeline, the customer was able to reduce their Cycle Time by more than 250%.

  - The customer now has a consistent way to quickly and reliably create multiple SCPs for OUs across their Organization.

- 100% Automation of SCP Deployment via Pipeline

  - Prior to engaging with AWS and Vertical Relevance, Cycle Time increased in a non-linear fashion as more OUs were added to the Organization; the manual validation and testing required with each new OU outpaced the available resource pool.

  - By eliminating all manual processes and automating 100% of the SCP deployment process, the customer both reduced their cycle time and observed no significant increase in cycle time as they scaled upwards and added more OUs.

Lessons Learned

Thinking holistically and taking an automation-first approach goes a long way. By considering your current infrastructure designs and imagining what a future workload would look like running on that infrastructure, you can avoid implementing manual processes that will not scale with the workload. Removing each manual step in the SCP deployment process required a great deal of upfront coding but will ultimately pay off in spades once the customer starts to roll out this solution across its entire organization.

Summary

By engaging AWS and Vertical Relevance, the customer was able to automate the deployment of SCPs across their AWS Organization. After implementing a multi-stage pipeline that performed automated checks to ensure that SCPs were compliant with security policies and did not conflict with service usage across their accounts, the customer could quickly and reliably make changes to SCPs. With these changes in place, the Security Team could update organization-wide security controls more quickly and with more confidence, which drastically improved the customer's overall security posture.