Overview

In the high-stakes arena of modern enterprise software development, organizations are racing to ship updates at breakneck speed, relying on diverse teams, cutting-edge practices, and new technologies. Within this relentless pursuit of innovation lies a real danger: catastrophic bugs can slip through the cracks, undetected amid rapid deployments. Left unaddressed, these hidden defects become ticking time bombs that can wreak havoc on production systems. The cost of inaction? Escalating repair expenses, eroded user trust, and tarnished reputations. The last line of defense against this looming disaster is regression testing, a critical safeguard designed to rigorously interrogate every update and ensure the application remains sound. In the high-wire act of software development, it is the indispensable safety net that keeps disaster at bay.

Forward-thinking organizations, recognizing the perils of unchecked deployments and the excruciating tedium of manual testing, are now embedding automated regression testing deep within their deployment pipelines. This strategic move allows the application's integrity to be evaluated swiftly and efficiently immediately after updates are released. The result? A rapid response system that delivers near-immediate feedback, enabling teams to neutralize issues before they escalate.

However, crafting a robust, enterprise-grade regression testing solution is no small feat, especially in the era of distributed architectures. Building microservices on cloud technologies further complicates the picture, introducing a diverse array of development stacks and resources dedicated to different layers of the application. Across the user interface, platform APIs, backend processes, and database systems, the pressing challenge becomes finding a unified way to consolidate and orchestrate the right test framework for each layer. This harmonization is crucial for ensuring that every component, no matter how small or specialized, undergoes rigorous scrutiny, safeguarding the integrity of the application as a whole.

Addressing this challenge head-on, Vertical Relevance created the Automated Regression Testing Framework, an innovative solution tailored to the complexities of testing modern, intricate applications. This orchestration framework serves as a central hub that simplifies the integration of diverse testing tools, accelerating the adoption of thorough regression testing strategies. It aims to standardize the regression testing ecosystem and integrate smoothly into the development workflow.

Prescriptive Guidance

To lay a solid foundation for understanding the Automated Regression Testing Framework, it’s crucial to first familiarize ourselves with key terminology and best practices associated with automated regression testing. This preliminary step will not only enhance comprehension but also ensure a more meaningful engagement with the components of the framework.

Definitions

- Regression Testing – In the software development lifecycle, regression testing is the execution of a comprehensive suite of functional and non-functional tests after changes have been introduced to the application. Specifically, regression testing takes place after new code changes are deployed to the live application. Its purpose is to ensure that recent updates or modifications do not adversely affect existing functionality.

- Functional Testing – Functional testing evaluates the software system against a specific set of expected outcomes. Its purpose is to ensure that application components such as the interface, APIs, databases, and network communication deliver the business functionality correctly. Functional testing focuses on operational behavior; common types include unit testing, integration testing, and acceptance testing.

- Non-Functional Testing – When testing software, non-functional testing focuses on the end-user experience and operational aspects of a software application rather than the specific core functionality of its components. This type of testing evaluates the system’s overall attributes, ensuring it meets the predefined requirements for performance, usability, and reliability.

- Test Framework – A test framework is a set of guidelines, tools, and processes designed to facilitate the testing of software applications. It provides a structured environment in which test suites can be developed, executed, and managed. A test framework includes libraries for writing test scripts, a way to organize and execute tests, and mechanisms to validate and report results. Frameworks can allow developers to write test suite scripts in various programming languages, such as Java, C#, Python, and Ruby. These suites can range from simple actions, like calling APIs, clicking buttons, and entering text into forms, to more complex scenarios, such as navigating through web pages and handling dynamic content.

- Test Suite – A test suite is a defined series of test cases that verify that a specific feature set or business workflow behaves as expected. A test suite also includes predefined environment configurations, scripts that detail the specific procedure of each test, and a defined set of expected outcomes.

- Test Case – A test case is a single set of instructions and expected results used to validate the functional or non-functional behavior of a feature within the software.

- Code Coverage – In software testing, code coverage refers to the proportion of application code that is executed, or “touched,” by tests. It measures how thorough testing is by showing which parts of the code are exercised and which remain untested.

- Re-emergence – In regression testing, “re-emergence” happens when old bugs, thought to be fixed, pop up again in a newer version of software. This could be due to the latest updates bringing back old problems or because the bugs were not fully fixed initially. Re-emergence can point to issues in how software is developed and tested.

- Defect Leakage – In software testing, defect leakage refers to bugs that go undetected until the later stages of a product release. Organizations strive to minimize defect leakage because defects found late are typically the costliest to repair.

- Regression Defect – A regression defect is a type of bug that appears after changes have been made to a part of the software. These bugs specifically arise in portions of the software that were previously working fine.

- Continuous Integration – Continuous Integration is the software development practice of frequently merging code changes into a central repository. Each merge automatically triggers builds and tests, ensuring that recent changes integrate smoothly with the existing code.

- Build – In the software development lifecycle, a “build” refers to the process of compiling or transforming source code, fetching dependencies, and packaging everything into a runnable software application ready for deployment.

Automated Regression Testing Strategy

Automating regression tests can significantly reduce the burden of manual testing, turning it into a more streamlined and manageable process. However, the challenge of sustaining an enterprise-level regression test suite, potentially comprising 50,000 or even 100,000 test cases, should not be underestimated. This section offers a strategy to guide the creation and maintenance of an extensive automated regression testing suite, ensuring it remains effective and scalable under the demands of modern software development.

The PACT Model

Defining and Selecting Automated Regression Tests

In the context of developing an effective automated regression testing strategy, the PACT model offers a structured approach, breaking down the process into four key components: Prioritize, Associate, Calibrate, and Track. Each element plays a crucial role in ensuring the strategy is both comprehensive and efficient.

Figure-01

Prioritize: This step involves identifying the most critical areas of the application that require testing. By prioritizing test cases that touch high-traffic modules, critical features, or complex algorithms, teams can focus their efforts on an initial pool of test suites to automate first and then extend as features are updated. This prioritization ensures that resources are allocated effectively, covering the highest-risk areas first.
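To make the Prioritize step concrete, the sketch below ranks candidate test cases by a weighted risk score built from the three signals named above. The `CandidateTest` type, the 0..1 normalization, and the values in `WEIGHTS` are illustrative assumptions, not part of the PACT model itself; teams would substitute their own traffic, criticality, and complexity data.

```python
from dataclasses import dataclass

# Hypothetical weights for the three risk signals; tune them to your own risk model.
WEIGHTS = {"traffic": 0.5, "criticality": 0.3, "complexity": 0.2}


@dataclass(frozen=True)
class CandidateTest:
    name: str
    traffic: float      # 0..1, share of production traffic hitting the module
    criticality: float  # 0..1, business impact if the feature breaks
    complexity: float   # 0..1, e.g. normalized cyclomatic complexity


def risk_score(test: CandidateTest) -> float:
    """Weighted sum of the signals named in the Prioritize step."""
    return (WEIGHTS["traffic"] * test.traffic
            + WEIGHTS["criticality"] * test.criticality
            + WEIGHTS["complexity"] * test.complexity)


def initial_pool(candidates: list[CandidateTest], size: int) -> list[CandidateTest]:
    """Return the `size` highest-risk candidates to automate first."""
    return sorted(candidates, key=risk_score, reverse=True)[:size]
```

For example, `initial_pool(candidates, 50)` returns the 50 highest-risk cases as the first candidates for automation. A weighted sum is only one option; a simple tiered ranking (critical, high, medium) works just as well when numeric data is unavailable.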

Associate: Association links existing test cases to specific requirements or areas of the application. Test cases can be categorized and sub-categorized by the module or business functionality they are associated with. This facilitates traceability, making it easier to determine which parts of the application are affected by changes and thereby streamlining the selection of relevant tests for each regression cycle. Many organizations already identify the business entity that owns each application module, often for cost-allocation purposes. These identifiers can be attached to test cases as tags to highlight upstream and downstream dependencies, so that those tests can be targeted whenever a dependent service is updated.
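One way to put association into practice is to record which services consume which, walk that graph from a changed service to every downstream consumer, and select the tests tagged with any affected service. The service names, the `CONSUMERS` map, and the tag catalog below are hypothetical placeholders for whatever ownership and dependency data an organization already maintains.

```python
from collections import deque

# Hypothetical dependency map: each service lists the services that consume it.
CONSUMERS = {
    "payments-api": ["checkout-api"],
    "checkout-api": ["web-ui"],
    "inventory-api": ["checkout-api", "search-api"],
    "search-api": ["web-ui"],
    "web-ui": [],
}

# Hypothetical test catalog: test name -> tags naming the services it covers.
TEST_TAGS = {
    "test_card_authorization": {"payments-api"},
    "test_place_order": {"checkout-api"},
    "test_order_confirmation_page": {"web-ui", "checkout-api"},
    "test_search_results": {"search-api"},
}


def impacted_services(changed: str) -> set[str]:
    """Breadth-first walk from the changed service to every transitive consumer."""
    seen = {changed}
    queue = deque([changed])
    while queue:
        for consumer in CONSUMERS.get(queue.popleft(), []):
            if consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return seen


def select_tests(changed: str) -> list[str]:
    """Pick every test tagged with at least one impacted service."""
    impacted = impacted_services(changed)
    return sorted(name for name, tags in TEST_TAGS.items() if tags & impacted)
```

Here, a change to `payments-api` selects the payments, checkout, and order-confirmation tests, while the search test is skipped.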

Calibrate: Calibration is the continuous evaluation and adjustment of test cases to ensure they remain effective and relevant. This includes reviewing test cases for accuracy, updating them to reflect changes in the application, or pruning them altogether when they no longer apply. When a test fails, its validity should be verified before requesting an application fix. The goal is to favor quality of tests over quantity and to suppress noise as quickly as possible. Calibration ensures that the test suite evolves in tandem with the application, maintaining its effectiveness over time.
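Verifying a failing test's validity can be partly automated. The sketch below uses rerun-based triage, a common heuristic swapped in here for illustration rather than a step the framework prescribes: a test that fails on every rerun points to a likely regression defect, while one that passes on some reruns is a calibration candidate. `run_test` is a hypothetical callable supplied by your test runner.

```python
from typing import Callable


def triage_failure(run_test: Callable[[], bool], reruns: int = 3) -> str:
    """Rerun a failed test and classify the failure before filing an app bug.

    run_test is a hypothetical callable that executes one test case and
    returns True when it passes.
    """
    outcomes = [run_test() for _ in range(reruns)]
    if all(outcomes):
        # The original failure did not reproduce: check test data and environment.
        return "not-reproducible"
    if any(outcomes):
        # Intermittent result: calibrate or quarantine the test itself.
        return "flaky"
    # Fails every time: likely a genuine regression defect for the app team.
    return "consistent-failure"
```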

The diagram (Figure-02) represents the 4R’s of quality regression tests. This chart should be used as guidance when writing and reviewing regression tests.

Figure-02

Track: Tracking involves monitoring the execution of test cases and recording their outcomes, including which tests were run, when they ran, their results, and any issues encountered. Because the primary goal of automating regression tests is to shorten the feedback loop, a mechanism should be in place to make failures obvious and loud. Effective continuous tracking provides valuable insight into the health of the application over time, reveals trends in test results, and helps teams pinpoint areas that need additional attention.
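As a sketch of the Track step, the snippet below stores one record per regression run, computes a per-test pass rate, and produces a loud message when a test that passed in the previous run fails in the latest one. The `RunRecord` shape and the message format are assumptions; in practice this history would live in a database or in the reporting tool of your test platform.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class RunRecord:
    """One regression run: when it started and whether each test passed."""
    started_at: datetime
    results: dict[str, bool] = field(default_factory=dict)


def pass_rate(history: list[RunRecord], test: str) -> float:
    """Share of recorded runs in which `test` passed (runs without it are ignored)."""
    outcomes = [run.results[test] for run in history if test in run.results]
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def new_failures(history: list[RunRecord]) -> list[str]:
    """Tests that passed in the previous run but fail in the latest one."""
    if len(history) < 2:
        return []
    ordered = sorted(history, key=lambda run: run.started_at)
    previous, latest = ordered[-2].results, ordered[-1].results
    return sorted(t for t, ok in latest.items() if not ok and previous.get(t, False))


def alert_text(history: list[RunRecord]) -> str:
    """A loud, one-line summary suitable for a chat channel or pager."""
    failures = new_failures(history)
    if not failures:
        return "Regression run OK: no new failures."
    return "REGRESSION: newly failing tests: " + ", ".join(failures)
```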

Best Practices / Design Principles

Tests As Code

The “tests as code” approach, in which tests are written and maintained as plain code, aligns seamlessly with the principles of infrastructure as code (IaC), enabling tests to be version-controlled, reviewed, and integrated into continuous integration and deployment pipelines just like application code. Adopting “tests as code” promotes greater precision and flexibility, allowing developers to leverage the full power of programming languages and IDEs to write more expressive and comprehensive tests. Moreover, this approach significantly enhances the extensibility of test frameworks, as code-based tests can be easily refactored, extended, and reused across different scenarios and projects. As a result, teams can achieve a high degree of automation and integration in their testing processes, leading to more reliable, maintainable, and scalable testing practices that keep pace with rapid development cycles and evolving application architectures.

Deployment Pipeline Integration

Within the deployment pipeline, regression testing should be initiated automatically as the next phase after a successful deployment. When changes are introduced into the environment, an automated mechanism should trigger test execution to validate the application's integrity. If any tests fail to meet the expected criteria, the failures must be reported promptly and the relevant stakeholders notified immediately.

Test Suite Portability

When designing effective regression testing strategies, it is essential to build test suites with portability in mind. This means writing test cases that reference environment-specific dependencies through environment variables rather than hard-coded values. Parameterizing test suites is equally important, since it allows environment configurations to be supplied dynamically and a target environment to be specified easily. Dynamic configuration keeps regression tests versatile enough to run at every stage of the software development lifecycle, from non-production to production environments, thereby maintaining consistency and reliability.
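The sketch below illustrates this kind of parameterization: the target environment is chosen by an argument or a `TARGET_ENV` environment variable, and a `BASE_URL` variable can override the URL for ad hoc environments. The variable names, environment names, and example.com URLs are hypothetical.

```python
import os
from typing import Optional

# Hypothetical per-environment defaults. In practice these values might live in
# pipeline variables or a parameter store rather than in the test code.
ENVIRONMENTS = {
    "dev": {"base_url": "https://dev.example.com", "timeout_s": 30},
    "staging": {"base_url": "https://staging.example.com", "timeout_s": 20},
    "prod": {"base_url": "https://www.example.com", "timeout_s": 10},
}


def resolve_target(target_env: Optional[str] = None) -> dict:
    """Return settings for the target environment.

    Precedence: explicit argument, then the TARGET_ENV variable, then "dev".
    A BASE_URL variable, when set, overrides the environment's default URL.
    """
    env_name = target_env or os.environ.get("TARGET_ENV", "dev")
    if env_name not in ENVIRONMENTS:
        raise ValueError(f"unknown target environment: {env_name!r}")
    settings = dict(ENVIRONMENTS[env_name], name=env_name)
    settings["base_url"] = os.environ.get("BASE_URL", settings["base_url"])
    return settings
```

Because the same test code reads its target at run time, one container image can be pointed at dev, staging, or production purely through configuration.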

Test Suite Prioritization

When automating regression testing, running the full suite of end-to-end tests with every deployment is neither practical nor efficient. Instead, it's recommended to organize tests into categories based on business function and to prioritize them by feature set, business criticality, or user feedback. It is also important to identify and categorize the upstream and downstream dependencies of a feature or bug fix, so that the integrity of the affected application workflow can be confirmed.

Tags within a test suite can identify the test cases that need to run in response to an update or the introduction of a new feature. A single test case can carry multiple tags, allowing it to belong both to a narrowly focused subset targeting a specific workflow and to a broader suite covering an entire platform layer, such as the UI or API components.
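A minimal tag-selection sketch follows, assuming a hypothetical in-memory catalog in which each test carries a set of tags; a test is selected if it has any requested tag and none of the excluded ones. Most test runners offer this natively (for example, pytest marker expressions passed with `-m`), so the code only illustrates the selection logic.

```python
# Hypothetical test catalog: each test case carries one or more tags.
CATALOG = {
    "test_login_form": {"ui", "auth", "smoke"},
    "test_token_refresh": {"api", "auth"},
    "test_checkout_flow": {"ui", "checkout"},
    "test_order_endpoint": {"api", "checkout", "smoke"},
}


def select_by_tags(include: set[str], exclude: frozenset[str] = frozenset()) -> list[str]:
    """Return tests carrying ANY include tag and NO exclude tag."""
    return sorted(
        name
        for name, tags in CATALOG.items()
        if tags & include and not tags & exclude
    )
```

A workflow-level tag such as `checkout` pulls in both the UI and API checkout tests, while a layer-level tag such as `api` selects every API test regardless of workflow.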

Store Test Configurations with the Application

Maintaining test configurations within the application's own repository is considered a best practice in software development. This approach provides clear visibility into the specific tests associated with the application, supporting a more structured and organized testing process. It also lets the teams responsible for the application manage and update test configurations directly in an accessible repository. As a result, testing protocols stay closely aligned with the application's evolving requirements and can easily be picked up and applied in the CI pipeline.

Automated Regression Testing Framework

Vertical Relevance’s Automated Regression Testing (ART) Framework is designed to orchestrate regression test automation across multi-platform solutions, all managed from the cloud. Different layers of an application may benefit from dedicated test suites built to address their unique testing requirements.

Components

- Step Functions – AWS Step Functions is designed to streamline and automate the coordination of different tasks and services used within an application. It acts as an orchestrator, allowing the creation, management, and visualization of workflows that integrate various AWS services and functionalities.

- Lambda – AWS Lambda is a serverless, event-driven compute service that lets you run code for virtually any type of application or backend service without provisioning or managing servers.

- ECS – Amazon Elastic Container Service (ECS) is a fully managed container orchestration service that simplifies running, scaling, and managing containers within a cluster.

- Fargate – AWS Fargate is a serverless compute engine for containers that simplifies the deployment, scaling, and management of containerized applications. Most importantly, it runs containers without requiring you to provision or manage the underlying servers, abstracting away the infrastructure behind the cluster.

- ECS / Fargate Task – In AWS Step Functions, a “Task” state is a fundamental component that represents an individual unit of work within a workflow: a specific action or operation performed as part of that workflow. AWS Step Functions can execute tasks that run containers on Amazon ECS, using either the EC2 or the Fargate launch type.

- ECR – Amazon Elastic Container Registry (ECR) is a fully managed container registry service that provides a reliable and secure location for storing container images.

- S3 – Amazon S3 is an object storage service offering industry-leading scalability, data availability, security, and performance. In this framework, test suite results are delivered to and kept in S3 for analysis.

How it works

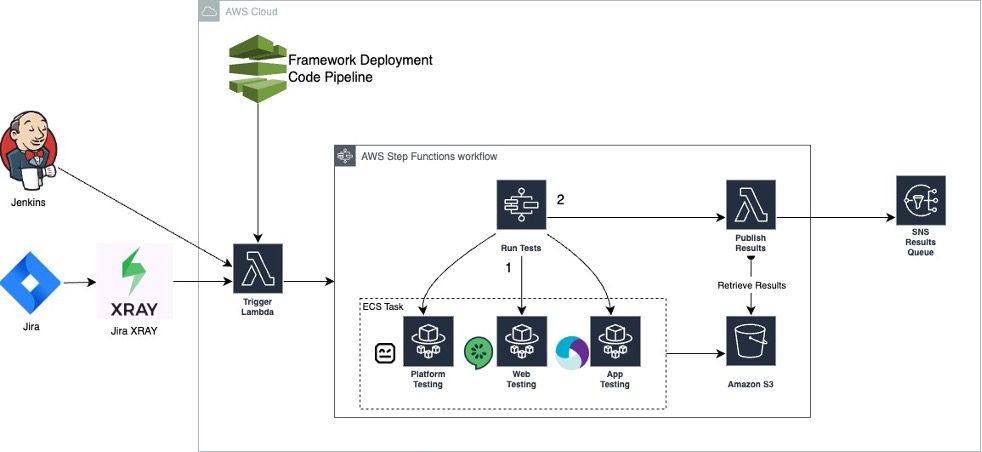

The solution follows a serverless architecture and leverages a container orchestrator. A “trigger” Lambda serves as the entry point to the framework; it validates the configuration file and kicks off the AWS Step Functions workflow. The workflow manages the parallel launch of each test suite container and the collection of each suite's results. The containers house and run the various test suites that target the live application or simulate its use.
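The fan-out described above maps naturally onto a Step Functions `Parallel` state. The Python sketch below builds an Amazon States Language definition with one branch per suite; each branch runs the suite as an ECS Fargate task through the `ecs:runTask.sync` integration and catches any error so that a failing suite cannot abort the other branches. The cluster ARN, subnet ID, task and container naming, the `TEST_CONFIG` variable, and the `publish-results` function name are placeholders, not the framework's actual CDK definitions.

```python
import json

# Placeholder identifiers: substitute the resources your CDK stack creates.
CLUSTER_ARN = "arn:aws:ecs:us-east-1:123456789012:cluster/regression-tests"
SUBNETS = ["subnet-0123456789abcdef0"]
RESULTS_FUNCTION = "publish-results"  # hypothetical publish Lambda name


def suite_branch(suite: str) -> dict:
    """One Parallel branch: run the suite's Fargate task and absorb any failure."""
    return {
        "StartAt": f"Run_{suite}",
        "States": {
            f"Run_{suite}": {
                "Type": "Task",
                # .sync makes the branch wait until the ECS task stops.
                "Resource": "arn:aws:states:::ecs:runTask.sync",
                "Parameters": {
                    "LaunchType": "FARGATE",
                    "Cluster": CLUSTER_ARN,
                    "TaskDefinition": suite,  # assumes task family == suite name
                    "NetworkConfiguration": {"AwsvpcConfiguration": {"Subnets": SUBNETS}},
                    "Overrides": {
                        "ContainerOverrides": [{
                            "Name": suite,  # assumes container name == suite name
                            "Environment": [{
                                "Name": "TEST_CONFIG",
                                # Forward the <suite>_config block as a JSON string.
                                "Value.$": f"States.JsonToString($.{suite}_config)",
                            }],
                        }]
                    },
                },
                "ResultPath": None,  # discard the task output; results go to S3
                # Catching here keeps one failed suite from failing the Parallel state.
                "Catch": [{
                    "ErrorEquals": ["States.ALL"],
                    "ResultPath": "$.error",
                    "Next": f"Failed_{suite}",
                }],
                "End": True,
            },
            f"Failed_{suite}": {"Type": "Pass", "End": True},
        },
    }


def state_machine(suites: list[str]) -> dict:
    """Fan out all suites in parallel, then hand off to the results step."""
    return {
        "StartAt": "RunTestSuites",
        "States": {
            "RunTestSuites": {
                "Type": "Parallel",
                "Branches": [suite_branch(s) for s in suites],
                "Next": "WriteTestResults",
            },
            "WriteTestResults": {
                "Type": "Task",
                "Resource": "arn:aws:states:::lambda:invoke",
                "Parameters": {"FunctionName": RESULTS_FUNCTION, "Payload.$": "$"},
                "End": True,
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(state_machine(["web_tests", "api_tests"]), indent=2))
```

Catching errors inside each branch matters because an uncaught error in any branch fails the whole `Parallel` state and stops the remaining branches.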

Automated Regression Testing:

Figure-03

Below is a detailed step-by-step walkthrough of the operational process for the automated regression testing framework.

- Create and Upload Test Suite Images – Test suite container images must be built and pushed to an Amazon ECR repository.

  - The images can include any third-party test suite, as long as they meet the framework's expectations: accepting a configuration payload, initializing the test suite, and writing test results to the associated S3 bucket.

  - Test suites must include all test cases to be selected and run against the application.

  - To use the new test suite images, the AWS CDK code must be updated to reference each image as a resource in the framework.

- Invoke Trigger Lambda – The trigger Lambda must be invoked with a test configuration document in the payload. The configuration document specifies which test suites to run as well as the properties each individual test suite needs.

  - The trigger Lambda validates the configuration file for correct test suite references and parameters.

  - The Lambda can be invoked via the AWS SDK, which makes it easy to integrate with third-party services such as Jenkins and Jira.

- Trigger Lambda Starts the AWS Step Functions Workflow – The workflow accepts the payload and launches the specified test suites in parallel.

  - The workflow waits for all containerized test suites to complete execution before moving to the next step.

  - Failed test suites are reported but do not break the framework's flow.

  - As each container completes, its results are pushed to an S3 bucket.

- Publish Lambda Gathers Results from S3

  - After all containers have reported completion, a follow-up Lambda retrieves the results from S3 <Based on ID?>

  - <Publish Lambda pushes to SQS>
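The container contract above (accept a configuration payload, run the suite, write results to S3) can be sketched as a small entrypoint. This version assumes the payload arrives as a JSON string in a `TEST_CONFIG` environment variable and that `run_suite` would be replaced by a call to the real testing tool; the report fields and the `results/<suite>.json` key layout are hypothetical choices. The S3 upload is injected as a `put_object` callable so the logic can be exercised without AWS.

```python
import json
import os
from datetime import datetime, timezone
from typing import Any, Callable, Mapping


def load_config(environ: Mapping[str, str]) -> dict:
    """Parse the suite's configuration payload (TEST_CONFIG is an assumed name)."""
    config = json.loads(environ.get("TEST_CONFIG", "{}"))
    if not isinstance(config, dict):
        raise ValueError("TEST_CONFIG must be a JSON object")
    return config


def run_suite(config: dict) -> dict[str, bool]:
    """Placeholder for the real runner (Selenium, an API test tool, ...).

    Here every case listed under a hypothetical "cases" key simply passes.
    """
    return {case: True for case in config.get("cases", [])}


def build_report(suite: str, outcomes: dict[str, bool]) -> dict:
    """Summarize one suite run in a hypothetical JSON report schema."""
    failed = sorted(name for name, ok in outcomes.items() if not ok)
    return {
        "suite": suite,
        "finished_at": datetime.now(timezone.utc).isoformat(),
        "total": len(outcomes),
        "failed": failed,
        "status": "FAILED" if failed else "PASSED",
    }


def main(put_object: Callable[..., Any], bucket: str, suite: str,
         environ: Mapping[str, str] = os.environ) -> dict:
    """Load config, run the suite, and upload the JSON report to S3."""
    report = build_report(suite, run_suite(load_config(environ)))
    put_object(
        Bucket=bucket,
        Key=f"results/{suite}.json",  # hypothetical key layout
        Body=json.dumps(report).encode("utf-8"),
    )
    return report
```

Inside the container, the call could be `main(boto3.client("s3").put_object, bucket=os.environ["RESULTS_BUCKET"], suite="web_tests")`, where `RESULTS_BUCKET` is another assumed variable name.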

Automated Regression Testing Framework in Action

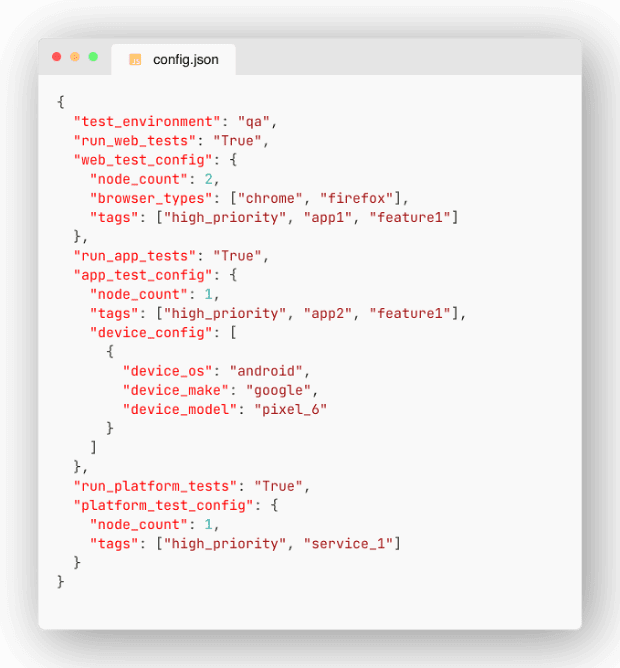

After exploring the components, architecture, and procedural steps of the Automated Regression Testing Framework, this section walks through a practical example of running three test suites in parallel. First, the following configuration file must be produced to trigger the specific test suites.

Figure-04

In Figure-04, several root properties are provided. Each key prefixed with “run_” selects a test suite container to run based on its Boolean value. For example, `run_web_tests` prompts the framework to trigger the “web_tests” container. This container houses the third-party tool Selenium to run visual and functional tests against a web application. When a suite is requested, the framework also looks for a property following the naming convention `<container_name>_config` and forwards all of its child properties to the target test container.
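These naming conventions are simple to validate and expand, similar in spirit to the checks the trigger Lambda performs before starting the workflow. The sketch below turns a configuration document into a plan of suites to run, each paired with its forwarded `<suite>_config` block. `KNOWN_SUITES` stands in for however the deployed framework tracks its registered images, and the example values are hypothetical rather than the contents of Figure-04.

```python
from typing import Any

# Hypothetical registry of suites the framework knows about (one per ECR image).
KNOWN_SUITES = {"web_tests", "api_tests", "load_tests"}


def plan_run(config: dict[str, Any]) -> dict[str, dict]:
    """Return {suite_name: suite_config} for every suite toggled on.

    A root key "run_<suite>" with a Boolean value selects the suite, and
    "<suite>_config" holds the properties forwarded to that container.
    """
    plan = {}
    for key, value in config.items():
        if not key.startswith("run_"):
            continue
        suite = key[len("run_"):]
        if suite not in KNOWN_SUITES:
            raise ValueError(f"{key}: no test suite named {suite!r}")
        if not isinstance(value, bool):
            raise ValueError(f"{key} must be a Boolean, got {value!r}")
        if value:
            suite_config = config.get(f"{suite}_config", {})
            if not isinstance(suite_config, dict):
                raise ValueError(f"{suite}_config must be an object")
            plan[suite] = suite_config
    if not plan:
        raise ValueError("configuration does not enable any test suite")
    return plan
```

For a configuration with `"run_web_tests": true` and `"run_api_tests": false`, only `web_tests` is planned, and its `web_tests_config` block is passed through unchanged.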

The AWS CLI can be used to invoke the trigger Lambda, passing the configuration file from Figure-04 as its payload.
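The same invocation can be made from code with the AWS SDK, which is how a pipeline tool such as Jenkins would typically call it. The sketch below uses the boto3 Lambda client's `invoke` operation with a synchronous `RequestResponse` invocation, so errors raised by the trigger surface immediately. The function name `art-trigger` is hypothetical, and the client is passed in (normally `boto3.client("lambda")`) so the function can be exercised without AWS credentials.

```python
import json
from typing import Any


def trigger_regression_run(lambda_client: Any, config: dict,
                           function_name: str = "art-trigger") -> Any:
    """Invoke the trigger Lambda synchronously with the test configuration.

    lambda_client is expected to be boto3.client("lambda"); "art-trigger" is a
    hypothetical name, so use the function name your CDK stack outputs.
    """
    response = lambda_client.invoke(
        FunctionName=function_name,
        InvocationType="RequestResponse",  # wait for the trigger's result
        Payload=json.dumps(config).encode("utf-8"),
    )
    body = json.loads(response["Payload"].read())
    if "FunctionError" in response:
        # The trigger rejected the configuration or crashed; surface its error payload.
        raise RuntimeError(f"trigger failed: {body}")
    return body
```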

The Lambda then starts the AWS Step Functions workflow, which runs the test containers.

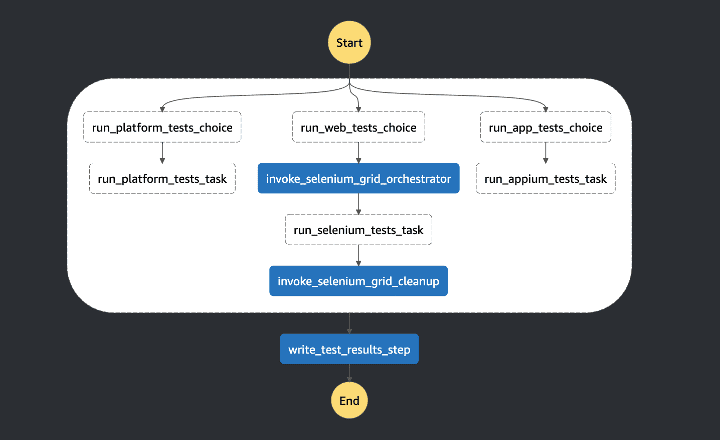

Figure-05

Figure-05 shows the web_tests container running. A final `write_test_results_step` gathers the results from S3 and posts them to the appropriate stream.
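A results step of this kind mostly merges per-suite reports into one verdict. The sketch below assumes each suite wrote a JSON report with `suite`, `status`, and `failed` fields (a hypothetical schema) and flags suites that never reported at all, since a container that crashed before writing its results would otherwise go unnoticed. Reading from S3 uses the boto3 `list_objects_v2` paginator and `get_object`; where the summary is posted afterwards is left open here.

```python
import json
from typing import Any, Iterable


def read_reports(s3_client: Any, bucket: str, prefix: str) -> list[dict]:
    """Load every JSON report under `prefix`; s3_client is boto3.client("s3")."""
    reports = []
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket, Prefix=prefix):
        for obj in page.get("Contents", []):
            body = s3_client.get_object(Bucket=bucket, Key=obj["Key"])["Body"].read()
            reports.append(json.loads(body))
    return reports


def summarize(expected: Iterable[str], reports: list[dict]) -> dict:
    """Combine per-suite reports into one overall verdict."""
    by_suite = {report["suite"]: report for report in reports}
    # A suite with no report most likely crashed before writing its results.
    missing = sorted(set(expected) - set(by_suite))
    failed = sorted(s for s, r in by_suite.items() if r.get("status") != "PASSED")
    return {
        "status": "FAILED" if missing or failed else "PASSED",
        "failed_suites": failed,
        "missing_suites": missing,
        "failed_cases": {s: by_suite[s].get("failed", []) for s in failed},
    }
```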

Blueprint

This section provides the blueprint for provisioning the Automated Regression Testing Framework orchestration solution.

- CDK Deployment – The AWS infrastructure and Lambda code necessary to deploy and operate the Automated Regression Testing Framework

- Test Framework Docker Container – A sample test framework Docker container deployable with AWS Fargate using CDK

Benefits

- Flexibility and Adaptation – The use of containerized test suites enables support for a broad range of testing tools and methodologies, making the framework adaptable to different project requirements and technology stacks. This flexibility ensures that teams can use the best tools for their specific testing needs without being constrained by compatibility issues.

- Improved Collaboration – By centralizing test management, the framework fosters better collaboration among development, QA, and operations teams. Clear visibility into tests and results helps streamline communications and align efforts towards common quality goals.

End Result

In conclusion, the Automated Regression Testing Framework significantly streamlines the regression testing process, making it more accessible and easier to adopt across entire organizations. Integrating the framework provides stronger assurance that all distributed application components receive comprehensive test coverage within the continuous integration process. Furthermore, it offers the flexibility to automate testing in the way that best suits the needs of each application module, moving away from the constraints of a one-size-fits-all approach. This adaptability not only enhances efficiency but also ensures that diverse solutions receive the meticulous testing they require, paving the way for more reliable, high-quality software deployments.